Ek Machine Learning engineer ke life mein wo moment zaroor aata hai jab wo excited hoke bolta hai: "Model training par 99% accuracy de raha hai!" — aur phir validation ya real data par sirf 60%.

Ye Overfitting hai. Aur ye shayad ML ki sabse common aur frustrating problem hai.

Aaj hum samjhenge:

- Underfitting aur Overfitting kya hain aur kaise detect karein

- Training/Validation curves padhna

- 8 practical solutions — code ke saath

- Kab kaunsa solution apply karein

Pehle Concept: Model Ka Goal Kya Hai?

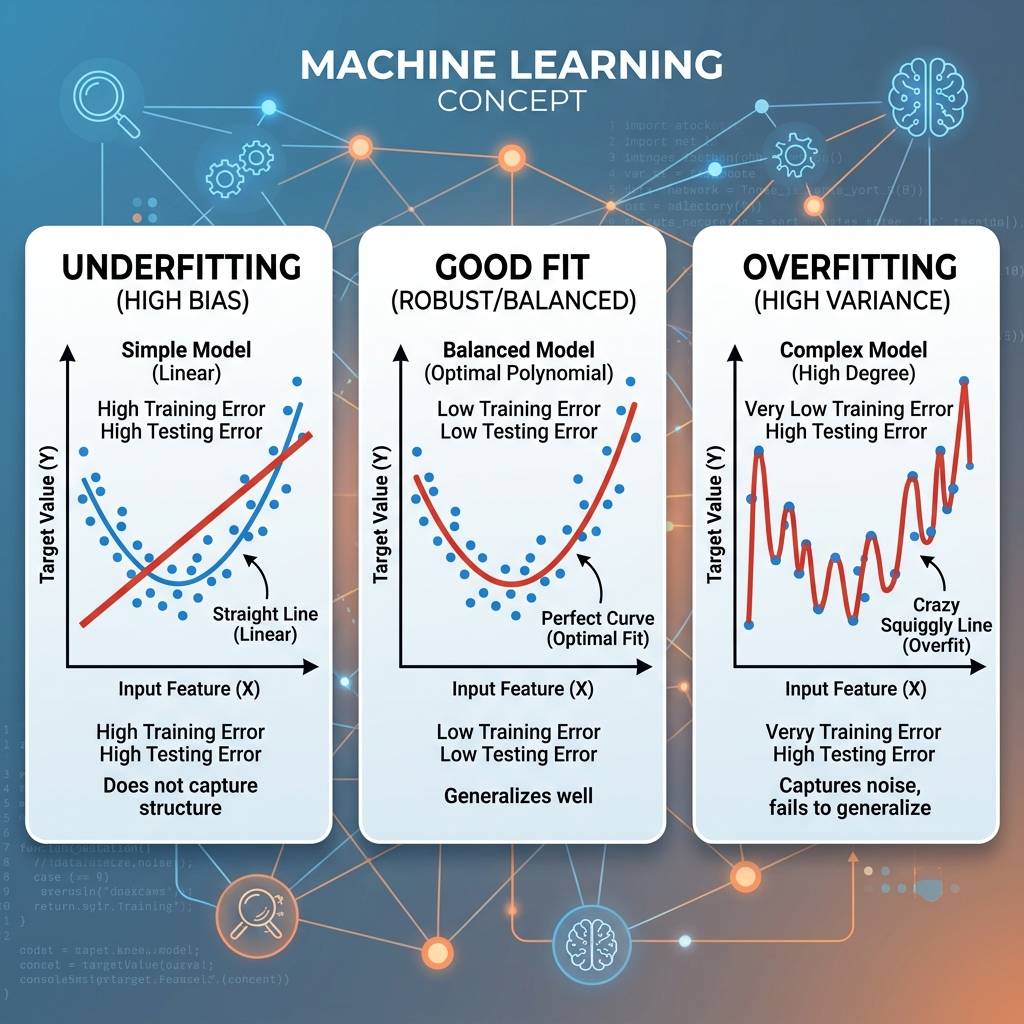

Machine Learning model ka asli goal ye nahi ki wo training data par accha kaam kare. Goal hai ki wo naaya, unseen data par accurately predict kare.

Training data par perfect performance = ye bhi galat ho sakta hai!

Training Data = Exam practice questions

Test Data = Actual exam (naaye questions)

Ek student:

❌ Jo sirf practice questions ratata hai → Exam mein fail (Overfitting)

❌ Jo kuch nahi padhta → Practice mein bhi fail (Underfitting)

✅ Jo concepts samajhta hai → Dono mein accha (Good Fit)

1. Underfitting: "Aalsi Model"

Kya Hota Hai?

Jab model data ke underlying patterns ko samajh hi nahi pata — na training mein, na testing mein. Model itna simple hai ki wo data ki complexity capture hi nahi kar sakta.

import numpy as np

import matplotlib.pyplot as plt

from sklearn.linear_model import LinearRegression

from sklearn.preprocessing import PolynomialFeatures

from sklearn.pipeline import Pipeline

# Non-linear data generate karo

np.random.seed(42)

X = np.linspace(-3, 3, 100).reshape(-1, 1)

y = 0.5 * X**3 - X**2 + 2*X + np.random.normal(0, 1, (100, 1))

# Underfitting model — sirf linear

underfitting_model = LinearRegression()

underfitting_model.fit(X, y)

# Ye curve ko follow nahi kar sakta — high bias

y_pred = underfitting_model.predict(X)

print(f"Underfitting R² score: {underfitting_model.score(X, y):.3f}") # Low score

Symptoms:

- Training accuracy bhi low

- Validation accuracy bhi low

- Both losses high

Kab hota hai:

- Model bahut simple hai (e.g., complex data par Linear Regression)

- Features bahut kam hain

- Data bahut kam hai

2. Overfitting: "Ratta Maar Model"

Kya Hota Hai?

Model training data ke har chhote-bade pattern aur noise ko memorize kar leta hai. Result: training par excellent, naye data par pathetic.

from sklearn.tree import DecisionTreeClassifier

from sklearn.datasets import make_classification

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

# Dataset banana

X, y = make_classification(n_samples=500, n_features=20, n_informative=5,

random_state=42, noise=0.3)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Overfitting model — infinite depth Decision Tree

overfit_model = DecisionTreeClassifier(max_depth=None, random_state=42)

overfit_model.fit(X_train, y_train)

train_acc = accuracy_score(y_train, overfit_model.predict(X_train))

test_acc = accuracy_score(y_test, overfit_model.predict(X_test))

print(f"Training Accuracy: {train_acc:.3f}") # 1.000 (100%!)

print(f"Testing Accuracy: {test_acc:.3f}") # 0.720 (only 72%)

print(f"Generalization Gap: {train_acc - test_acc:.3f}") # 0.28 — huge!

Symptoms:

- Training accuracy very high (95-100%)

- Validation/Test accuracy much lower

- Large gap between train and val loss

3. Perfect Fit: "Samajhdaar Model"

# Optimal model — appropriate complexity

good_model = DecisionTreeClassifier(max_depth=5, random_state=42) # Limited depth

good_model.fit(X_train, y_train)

train_acc = accuracy_score(y_train, good_model.predict(X_train))

test_acc = accuracy_score(y_test, good_model.predict(X_test))

print(f"Training Accuracy: {train_acc:.3f}") # ~0.85

print(f"Testing Accuracy: {test_acc:.3f}") # ~0.82 (close!)

print(f"Generalization Gap: {train_acc - test_acc:.3f}") # 0.03 — small gap

Training Curves: Kaise Diagnose Karein?

Ye charts aapko bata dete hain model ki actual health:

import matplotlib.pyplot as plt

import numpy as np

from sklearn.model_selection import learning_curve

from sklearn.tree import DecisionTreeClassifier

fig, axes = plt.subplots(1, 3, figsize=(18, 5))

models = {

'Underfitting\n(max_depth=1)': DecisionTreeClassifier(max_depth=1),

'Good Fit\n(max_depth=5)': DecisionTreeClassifier(max_depth=5),

'Overfitting\n(max_depth=None)': DecisionTreeClassifier(max_depth=None)

}

for ax, (name, model) in zip(axes, models.items()):

train_sizes, train_scores, val_scores = learning_curve(

model, X, y, cv=5, n_jobs=-1,

train_sizes=np.linspace(0.1, 1.0, 10)

)

train_mean = train_scores.mean(axis=1)

val_mean = val_scores.mean(axis=1)

ax.plot(train_sizes, train_mean, 'b-o', label='Training', markersize=5)

ax.plot(train_sizes, val_mean, 'r-o', label='Validation', markersize=5)

ax.fill_between(train_sizes,

train_scores.min(axis=1), train_scores.max(axis=1), alpha=0.1, color='b')

ax.fill_between(train_sizes,

val_scores.min(axis=1), val_scores.max(axis=1), alpha=0.1, color='r')

ax.set_title(name, fontweight='bold')

ax.set_xlabel('Training Set Size')

ax.set_ylabel('Accuracy')

ax.legend()

ax.set_ylim(0.4, 1.05)

plt.tight_layout()

plt.savefig('learning_curves.png', dpi=150, bbox_inches='tight')

plt.show()

Padhna seekho:

- 🔴 Underfitting: Dono lines low hain, parallel

- 🟡 Good Fit: Lines close hain, validation reasonable hai

- 🔵 Overfitting: Training line high, Validation line neeche — bada gap

8 Solutions: Overfitting Fix Karo

Solution 1: Zyada Data Collect Karo

# Data augmentation (images ke liye)

from tensorflow.keras.preprocessing.image import ImageDataGenerator

augmented = ImageDataGenerator(

rotation_range=20,

width_shift_range=0.2,

horizontal_flip=True,

zoom_range=0.15

)

# Same images se artificially zyada training data

Solution 2: Dropout (Neural Networks)

import tensorflow as tf

model = tf.keras.Sequential([

tf.keras.layers.Dense(256, activation='relu'),

tf.keras.layers.Dropout(0.4), # 40% neurons randomly off — training only

tf.keras.layers.Dense(128, activation='relu'),

tf.keras.layers.Dropout(0.3),

tf.keras.layers.Dense(1, activation='sigmoid')

])

Solution 3: Regularization (L1/L2)

from sklearn.linear_model import Ridge, Lasso, LogisticRegression

# L2 Regularization (Ridge) — weights chhote karo

ridge = Ridge(alpha=1.0) # alpha = regularization strength

# L1 Regularization (Lasso) — kuch weights zero karo (feature selection)

lasso = Lasso(alpha=0.1)

# Neural network mein

tf.keras.layers.Dense(128, kernel_regularizer=tf.keras.regularizers.l2(0.01))

Solution 4: Early Stopping

from tensorflow.keras.callbacks import EarlyStopping

early_stop = EarlyStopping(

monitor='val_loss',

patience=10, # 10 epochs tak improve nahi → stop

restore_best_weights=True, # Best weights restore karo

min_delta=0.001 # Minimum improvement threshold

)

model.fit(X_train, y_train,

validation_split=0.2,

epochs=200, # Max epochs — early stopping handle karega

callbacks=[early_stop])

Solution 5: Cross-Validation

from sklearn.model_selection import cross_val_score, StratifiedKFold

kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

cv_scores = cross_val_score(model, X, y, cv=kfold, scoring='accuracy')

print(f"CV Scores: {cv_scores}")

print(f"Mean: {cv_scores.mean():.3f} ± {cv_scores.std():.3f}")

# Zyada honest evaluation — single train/test split se better

Solution 6: Reduce Model Complexity

# Decision Tree ki depth limit karo

dt = DecisionTreeClassifier(

max_depth=5, # Limit

min_samples_leaf=10, # Leaf node mein minimum 10 samples

min_samples_split=20 # Split ke liye minimum 20 samples

)

# Random Forest se better regularization

from sklearn.ensemble import RandomForestClassifier

rf = RandomForestClassifier(n_estimators=100, max_depth=8, random_state=42)

Solution 7: Batch Normalization

model = tf.keras.Sequential([

tf.keras.layers.Dense(256),

tf.keras.layers.BatchNormalization(), # Normalize activations

tf.keras.layers.ReLU(),

tf.keras.layers.Dense(128),

tf.keras.layers.BatchNormalization(),

tf.keras.layers.ReLU(),

tf.keras.layers.Dense(1, activation='sigmoid')

])

Solution 8: Feature Selection

from sklearn.feature_selection import SelectKBest, f_classif

# Sirf top 10 features rakhna

selector = SelectKBest(f_classif, k=10)

X_selected = selector.fit_transform(X_train, y_train)

# Feature importance from Random Forest

rf.fit(X_train, y_train)

importances = rf.feature_importances_

# Low importance features drop karo

Quick Diagnosis Chart

| Symptom | Problem | Primary Fix |

|---|---|---|

| Train low, Val low | Underfitting | Complex model, more features |

| Train high, Val low | Overfitting | Dropout, regularization, data |

| Train-Val gap > 15% | Overfitting | Early stopping, cross-validation |

| Both losses stuck | Learning rate | Adjust LR, check data preprocessing |

| Validation loss increasing | Overfitting | Early stopping immediately |

FAQs

1. Overfitting aur High Variance mein kya fark hai? Wahi cheez — alag angle se dekh rahe ho. High Variance = model ka Overfitting behavior mathematical description hai.

2. Kya Dropout training ke baad bhi active rehta hai? Nahi! Sirf training mein. Inference par sab neurons active hote hain. Keras automatically handle karta hai.

3. Regularization kab use nahi karna chahiye? Agar data bahut zyada hai (millions of examples) aur model bilkul underfit nahi ho raha — regularization ki zaroorat kam hoti hai.

4. Cross-Validation bahut slow lagti hai — koi shortcut?

ShuffleSplit ya StratifiedShuffleSplit k-fold se faster hoti hai. Ya cross_val_score mein n_jobs=-1 lagao — parallel processing.

5. Neural network mein Dropout vs L2 — kab kaunsa? Dono saath use kar sakte ho. Typically: Dense layers ke baad Dropout, weights par L2. Convolutional layers mein Dropout se Batch Normalization better hoti hai.

Aapke model mein Overfitting aa rahi hai? Exact symptoms share karo — specific solution suggest karunga! 🔧

Tarun ke baare mein: Tarun ek AI educator hain jo Machine Learning ke practical debugging concepts ko hands-on code se explain karte hain. AI-Gyani par har problem ka solution real examples ke saath milta hai.