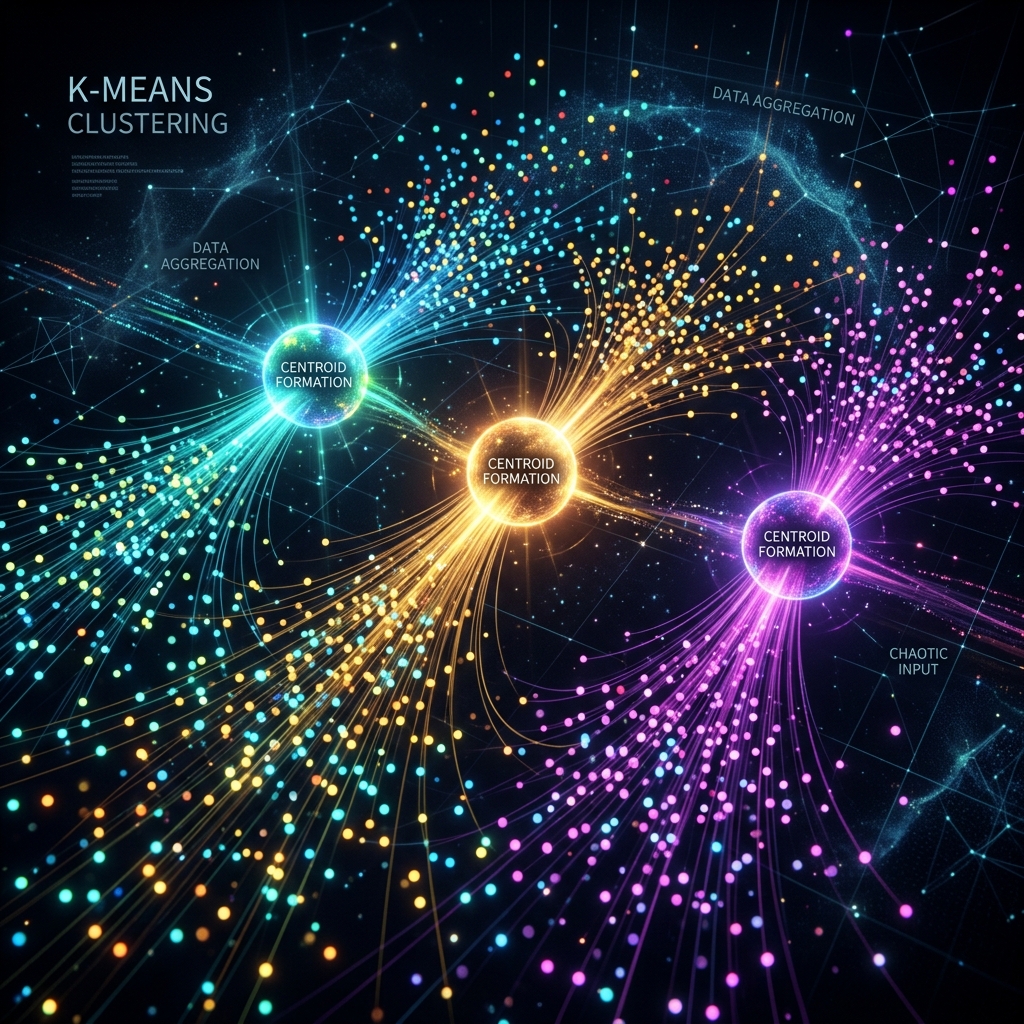

Sochiye aapke paas lakhon customers ka data hai par unka koi label nahi hai. Kaunsa customer zyada kharch karta hai? Kaunsa sirf discount dhoondhta hai? Ye sab janne ke liye hum Clustering use karte hain. K-Means iska sabse popular algorithm hai jo data ko "Similarities" ke basis par groups mein baant deta hai.

1. How K-Means Works: The 4 Steps

K-Means ek "Centroid-based" algorithm hai.

- Choose K: Sabse pehle decide karein ki kitne clusters chaiye (e.g., K=3).

- Initialize: Randomly 3 points (Centroids) rakhein.

- Assign: Har data point apne sabse nazdeek wale centroid ke paas chala jata hai.

- Update: Centroids move hote hain un clusters ke "Mean" center par. Ye tab tak chalta hai jab tak centroids hilna band na kar dein.

2. Choosing K: Elbow Method vs Silhouette Score

- Elbow Method: Hum K=1, 2, 3... try karte hain aur ek graph banate hain. Jahan graph "Elbow" (Kohni) ki tarah mudta hai, wo optimal K hai.

- Silhouette Score: Ye batata hai ki ek cluster ke points aapas mein kitne "Dense" hain aur doosre clusters se kitne "Door" hain. Score 1 ke jitna kareeb ho, utna accha cluster hai.

3. K-Means++: Initialization Magic

Pehle K-Means centroids ko randomly rakhta tha, jis se kabhi-kabhi galat clusters ban jate the.

- K-Means++ ek smart initialization technique hai jo centroids ko ek doosre se "Door" rakhti hai.

- Isse algorithm jaldi converge hota hai aur results 10x better aate hain.

4. Real World: Image Compression

K-Means sirf customer segmentation ke liye nahi hai.

- Maan lijiye ek image mein 16 million colors hain.

- K-Means se hum unhe 16 clusters mein baant sakte hain.

- Poori image sirf 16 colors mein dikhegi par file size 90% kam ho jayega.

5. Summary Table: Clustering Checklist

| Step | Action | Why? |

|---|---|---|

| Scaling | Use StandardScaler | Distance based algorithms need uniform scale |

| K-Selection | Elbow + Silhouette | To find natural number of groups |

| Initialization | k-means++ | To avoid local optima |

| Verification | Domain Knowledge | Clusters must make business sense |

FAQs

1. "Inertia" kya hota hai? Inertia batata hai ki cluster ke points apne centroid se kitne door hain. Kam inertia matlab acche clusters.

2. Outliers ka K-Means par kya asar hota hai? Bahut bada asar! Ek akela outlier centroids ko apni taraf khinch sakta hai, jis se poora cluster galat ho jayega. Clustering se pehle outliers ko hatana zaroori hai.

3. Kya K-Means categorical data par chalta hai? Nahi, kyonki ye "Mean" (Average) nikalta hai. Categorical data ke liye K-Modes use karte hain.

4. "Curse of Dimensionality" aur Clustering? Jab features bahut saare hote hain, toh distance meaningless ho jata hai. Clustering se pehle PCA use karna best practice hai.

Clustering un-labeled data se "Sona" (Insights) nikalne ka tareeqa hai. Ise master karke aap kisi bhi business ko optimize kar sakte hain! 📊

Tarun ke baare mein: Tarun unsupervised learning aur pattern recognition ke specialist hain. AI-Gyani par har cluster logical hai.