Pichli post mein humne Overfitting aur Underfitting dekha — practical symptoms aur fixes.

Ab dekhte hain iska mathematical foundation — Bias vs Variance Tradeoff. Ye concept ML ka core theory hai, aur har serious ML interview mein aata hai.

Dono posts alag hain: Overfitting/Underfitting behaviors describe karta hai. Bias/Variance explain karta hai kyon ye behaviors hote hain aur mathematically model error kahan se aata hai.

Total Error Decompose Karo

Machine Learning model ki total error teen sources se aati hai:

Total Error = Bias² + Variance + Irreducible Noise

Irreducible Noise: Data mein inherent randomness — isko koi bhi model eliminate nahi kar sakta (real world messy hai).

Bias aur Variance: Ye hum control kar sakte hain — aur yahan tradeoff hai.

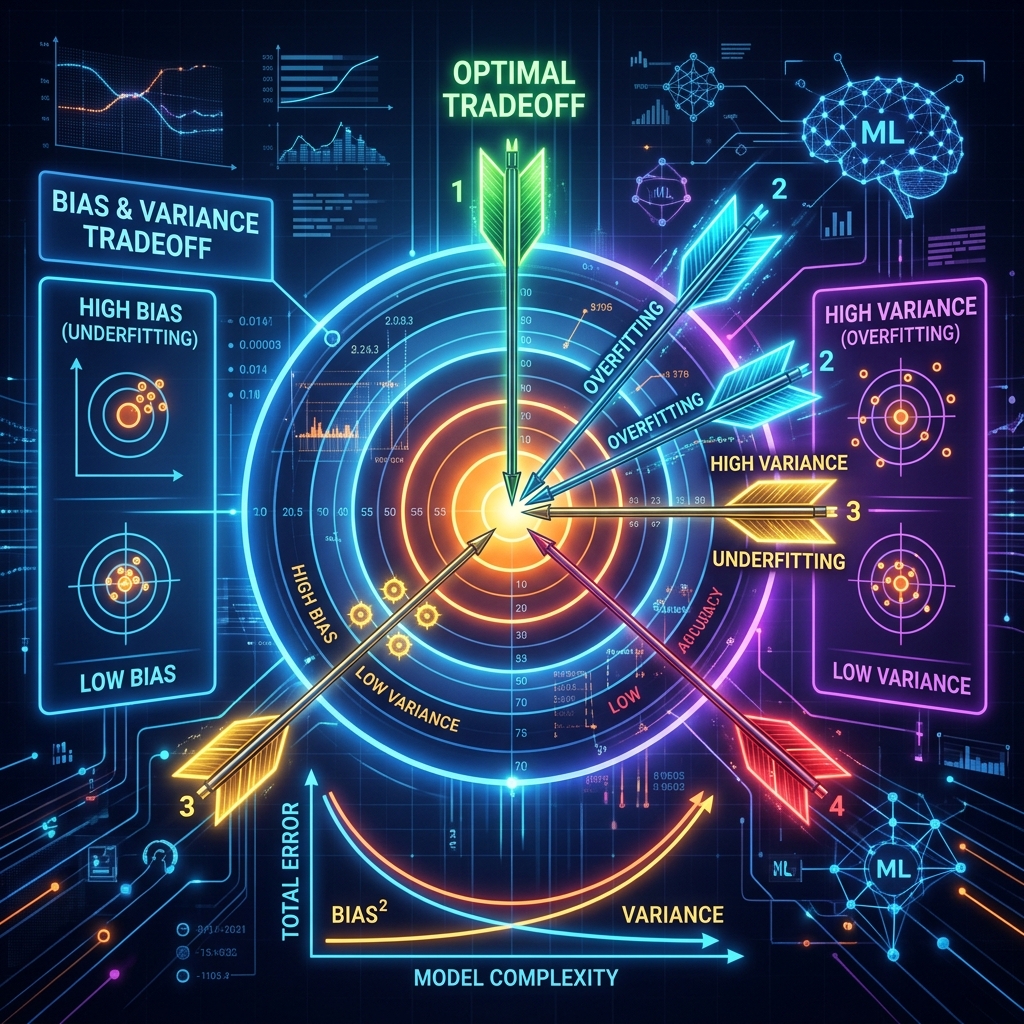

Bias Kya Hai? (Galat Assumption ki Galti)

Bias = Model ki systematic, consistent galti

Model ek assumption le leta hai jo data ke baare mein galat hai — aur consistently wahi galti karta rehta hai, chahe data kitna bhi dedo.

Archery Analogy

Socho aap teer chala rahe ho. High Bias = Har teer bullseye se bahut door — same side mein. Consistent hai par galat hai.

Target: O

Shots: × × × ← all far to the left, consistently

High Bias: Sab ek jagah hain, par galat jagah

Code: Bias Visualize Karo

import numpy as np

import matplotlib.pyplot as plt

from sklearn.linear_model import LinearRegression

from sklearn.preprocessing import PolynomialFeatures

from sklearn.pipeline import Pipeline

np.random.seed(42)

# True function: cubic (non-linear)

def true_function(X):

return 0.5 * X**3 - 2 * X**2 + X + 1

# Multiple small datasets (simulate different training runs)

n_datasets = 10

X_test = np.linspace(-3, 3, 200).reshape(-1, 1)

fig, axes = plt.subplots(1, 2, figsize=(14, 6))

for ax, (degree, title, color) in zip(

axes,

[(1, 'Linear (High Bias)', 'blue'), (9, 'Degree-9 (High Variance)', 'red')]

):

ax.plot(X_test, true_function(X_test), 'k-', linewidth=3, label='True Function', zorder=5)

predictions = []

for _ in range(n_datasets):

X_train = np.random.uniform(-3, 3, 20).reshape(-1, 1)

y_train = true_function(X_train) + np.random.normal(0, 2, X_train.shape)

model = Pipeline([

('poly', PolynomialFeatures(degree=degree)),

('reg', LinearRegression())

])

model.fit(X_train, y_train)

pred = model.predict(X_test)

predictions.append(pred)

ax.plot(X_test, pred, color=color, alpha=0.3, linewidth=1)

mean_pred = np.mean(predictions, axis=0)

ax.plot(X_test, mean_pred, '--', color=color, linewidth=2, label='Mean Prediction')

ax.set_title(title, fontweight='bold')

ax.legend()

ax.set_ylim(-30, 20)

plt.tight_layout()

plt.savefig('bias_variance_visualization.png', dpi=150, bbox_inches='tight')

plt.show()

Kya dikh raha hai:

- Linear model: sab predictions tightly clustered — par true function se door (High Bias)

- Degree-9 model: predictions har dataset par wild swing karte hain (High Variance)

Variance Kya Hai? (Training Data ke Saath Bahna)

Variance = Model ki predictions ka training data ke saath sensitivity

High variance model training data mein har cheez seekh leta hai — including random noise. Naya data aaya → predictions completely badal jaati hain.

Archery Analogy

High Variance = Teer kabhi left, kabhi right, kabhi top — bilkul random spread. Average sahi jagah ho sakta hai, par individual shots kahin bhi.

Target: O

Shots: × ← one far left

× ← one far right

× ← one far up

High Variance: All over the place, unpredictable

Mathematical Decomposition

# Bias² + Variance Calculation

from sklearn.linear_model import LinearRegression

from sklearn.tree import DecisionTreeRegressor

import numpy as np

def bias_variance_decompose(model, X_train_sets, y_train_sets, X_test, y_test_true):

"""

Multiple training sets par model train karke bias aur variance estimate karo

"""

predictions = []

for X_tr, y_tr in zip(X_train_sets, y_train_sets):

model_copy = type(model)(**model.get_params())

model_copy.fit(X_tr, y_tr)

predictions.append(model_copy.predict(X_test))

predictions = np.array(predictions) # Shape: (n_models, n_test)

# Bias: (mean prediction - true value)²

mean_pred = predictions.mean(axis=0)

bias_squared = np.mean((mean_pred - y_test_true) ** 2)

# Variance: average spread of predictions

variance = np.mean(predictions.var(axis=0))

return bias_squared, variance

# Different complexity levels

X_test = np.linspace(-3, 3, 50).reshape(-1, 1)

y_true = true_function(X_test).ravel()

results = {}

for max_depth in [1, 2, 4, 6, 8, None]:

X_train_sets = []

y_train_sets = []

for _ in range(50): # 50 different training sets

X = np.random.uniform(-3, 3, 30).reshape(-1, 1)

y = true_function(X).ravel() + np.random.normal(0, 2, 30)

X_train_sets.append(X)

y_train_sets.append(y)

model = DecisionTreeRegressor(max_depth=max_depth, random_state=42)

bias2, var = bias_variance_decompose(model, X_train_sets, y_train_sets, X_test, y_true)

depth_label = str(max_depth) if max_depth else "None (max)"

results[depth_label] = {"bias²": round(bias2, 2), "variance": round(var, 2),

"total": round(bias2 + var, 2)}

print(f"Depth {depth_label:8}: Bias²={bias2:.2f}, Variance={var:.2f}, Total={bias2+var:.2f}")

# Output typically shows:

# Depth 1 : Bias²=9.12, Variance=0.45, Total=9.57 (High Bias)

# Depth 4 : Bias²=1.23, Variance=1.89, Total=3.12 (Sweet Spot!)

# Depth None : Bias²=0.12, Variance=8.94, Total=9.06 (High Variance)

The Tradeoff: Graph

import matplotlib.pyplot as plt

import numpy as np

# Illustrative curves (real values depend on problem)

complexity = np.linspace(0.1, 10, 100)

# As complexity increases:

bias_squared = 8 * np.exp(-0.6 * complexity) # Bias decreases

variance = 0.3 * np.exp(0.5 * complexity) # Variance increases

total_error = bias_squared + variance + 1.5 # +1.5 = irreducible noise

optimal_idx = np.argmin(total_error)

plt.figure(figsize=(10, 6))

plt.plot(complexity, bias_squared, 'b-', linewidth=2.5, label='Bias²')

plt.plot(complexity, variance, 'r-', linewidth=2.5, label='Variance')

plt.plot(complexity, total_error, 'k-', linewidth=3, label='Total Error = Bias²+Var+Noise')

plt.axhline(y=1.5, color='gray', linestyle='--', alpha=0.7, label='Irreducible Noise')

plt.axvline(x=complexity[optimal_idx], color='green', linestyle='--', linewidth=2)

plt.annotate('🎯 Sweet Spot\n(Optimal Complexity)',

xy=(complexity[optimal_idx], total_error[optimal_idx]),

xytext=(complexity[optimal_idx]+1.5, total_error[optimal_idx]+2),

arrowprops=dict(arrowstyle='->', color='green', lw=2),

fontsize=11, color='green', fontweight='bold')

plt.xlabel('Model Complexity', fontsize=13)

plt.ylabel('Error', fontsize=13)

plt.title('Bias-Variance Tradeoff', fontsize=15, fontweight='bold')

plt.legend(fontsize=11)

plt.grid(True, alpha=0.3)

plt.tight_layout()

plt.savefig('bias_variance_curve.png', dpi=150, bbox_inches='tight')

plt.show()

Key insight: Dono minimize karna mathematically impossible hai simultaneously — ek badhta hai toh doosra girta hai. Sweet spot dhoondhna hi art of ML hai.

Summary Table: Bias vs Variance vs Overfitting/Underfitting

| Concept | What It Is | Symptom | Solution |

|---|---|---|---|

| High Bias | Model too simple | Both errors high | Complex model, more features |

| High Variance | Model too sensitive | Train good, Test bad | More data, regularization |

| Underfitting | High Bias manifestation | All accuracy low | Increase complexity |

| Overfitting | High Variance manifestation | Train-Test gap | Regularization, more data |

Ensemble Methods: Bias-Variance Dono Handle Karo

Smart algorithms mathematically bias-variance problem tackle karte hain:

Bagging (Bootstrap Aggregating) — Variance Reduce Karta Hai

from sklearn.ensemble import BaggingRegressor, RandomForestRegressor

from sklearn.tree import DecisionTreeRegressor

# High variance tree + bagging = low variance ensemble

base_tree = DecisionTreeRegressor(max_depth=None) # Intentionally deep (high variance)

# Bagging: Multiple trees on different data subsets → average predictions

bagging = BaggingRegressor(base_tree, n_estimators=100, random_state=42)

# Random Forest = Bagging + Random Feature Selection (even better)

rf = RandomForestRegressor(n_estimators=100, random_state=42)

# Result: Individual trees have high variance, but ensemble average is stable!

Kyon works: Individual trees ki errors cancel out jab average lete hain (uncorrelated errors).

Boosting — Bias Reduce Karta Hai

from sklearn.ensemble import GradientBoostingRegressor

import xgboost as xgb

# Boosting: Weak learners (high bias) sequentially combine

# Each learner previous ki errors fix karta hai

gbm = GradientBoostingRegressor(

n_estimators=200,

max_depth=3, # Intentionally shallow (high bias individually)

learning_rate=0.1

)

# XGBoost (more optimized)

xgb_model = xgb.XGBRegressor(

n_estimators=200,

max_depth=4,

learning_rate=0.05,

subsample=0.8 # Random sampling (variance control bhi)

)

Comparison:

| Method | Reduces | How | Example |

|---|---|---|---|

| Bagging | Variance | Average of parallel trees | Random Forest |

| Boosting | Bias | Sequential error correction | XGBoost, LightGBM |

| Stacking | Both | Meta-learning | Competition winners |

Practical Strategy: Kaise Decide Karein?

from sklearn.model_selection import cross_val_score

def diagnose_model(model, X, y, cv=5):

"""Model ki bias-variance situation diagnose karo"""

train_scores = cross_val_score(model, X, y, cv=cv, scoring='neg_mean_squared_error')

# Train error (approximate via CV on full data)

model.fit(X, y)

train_err = -((model.predict(X) - y) ** 2).mean()

val_err = train_scores.mean()

print(f"Train MSE (approx): {abs(train_err):.4f}")

print(f"Validation MSE (CV): {abs(val_err):.4f}")

print(f"Generalization Gap: {abs(val_err - train_err):.4f}")

if abs(train_err) > 0.5: # Threshold depends on problem

print("→ HIGH BIAS likely — model too simple")

print(" Fix: More complexity, more features, non-linear model")

elif abs(val_err - train_err) > 0.3:

print("→ HIGH VARIANCE likely — model overfitting")

print(" Fix: More data, regularization, ensemble methods")

else:

print("→ BALANCED — good fit! Fine-tune further if needed")

# Usage

from sklearn.tree import DecisionTreeRegressor

diagnose_model(DecisionTreeRegressor(max_depth=3), X, y)

FAQs

1. Bias aur Overfitting ek hi cheez hain? Nahi. Bias = High → Underfitting. Variance = High → Overfitting. Overfitting/Underfitting behaviors hain, Bias/Variance unke causes.

2. Kya dono ek saath high ho sakte hain? Technically possible, par rare. High Bias + High Variance = model bahut kharab hai. Isko fix karna is post ka point hai.

3. Irreducible noise ko kaise minimize karein? Technically nahi ho sakta — data ka natural randomness hai. Lekin better data collection (noise-free sensors, cleaner labels) se total noise reduce hoti hai.

4. Random Forest aur XGBoost mein kaunsa better hai? RF: low variance, interpretable, works out-of-box. XGBoost: often better accuracy, needs tuning. Tabular data competitions mein XGBoost mostly wins.

5. Neural network mein Bias-Variance kaise apply hoti hai? Same principle. Shallow/small network = high bias. Deep/wide network without regularization = high variance. BatchNorm + Dropout = variance control. Adam optimizer = faster convergence (bias kam hoti hai faster).

Bias-Variance Tradeoff ka yeh concept ML ke core mein hai — clear hua? Koi specific model par diagnose karna ho toh batao! 📊

Tarun ke baare mein: Tarun ek AI educator hain jo ML theory ko practical Python examples ke saath explain karte hain. AI-Gyani par har mathematical concept hands-on code ke saath aata hai.