LLMOps Kya Hai? Jab LLM Real World mein Jaata Hai!

ChatGPT ek amazing tool hai, par kya aap jaante ho ki ek company ko ChatGPT jaisa product banana aur chalaana kitna complex hota hai?

Prompt likhna easy hai. Par ek production-grade LLM application banana jo:

- Hazaron users handle kare

- Consistent aur safe responses de

- Cost-effectively run kare

- Improve karta rahe time ke saath

...yahi hai LLMOps ka kaam!

LLMOps Kya Hai?

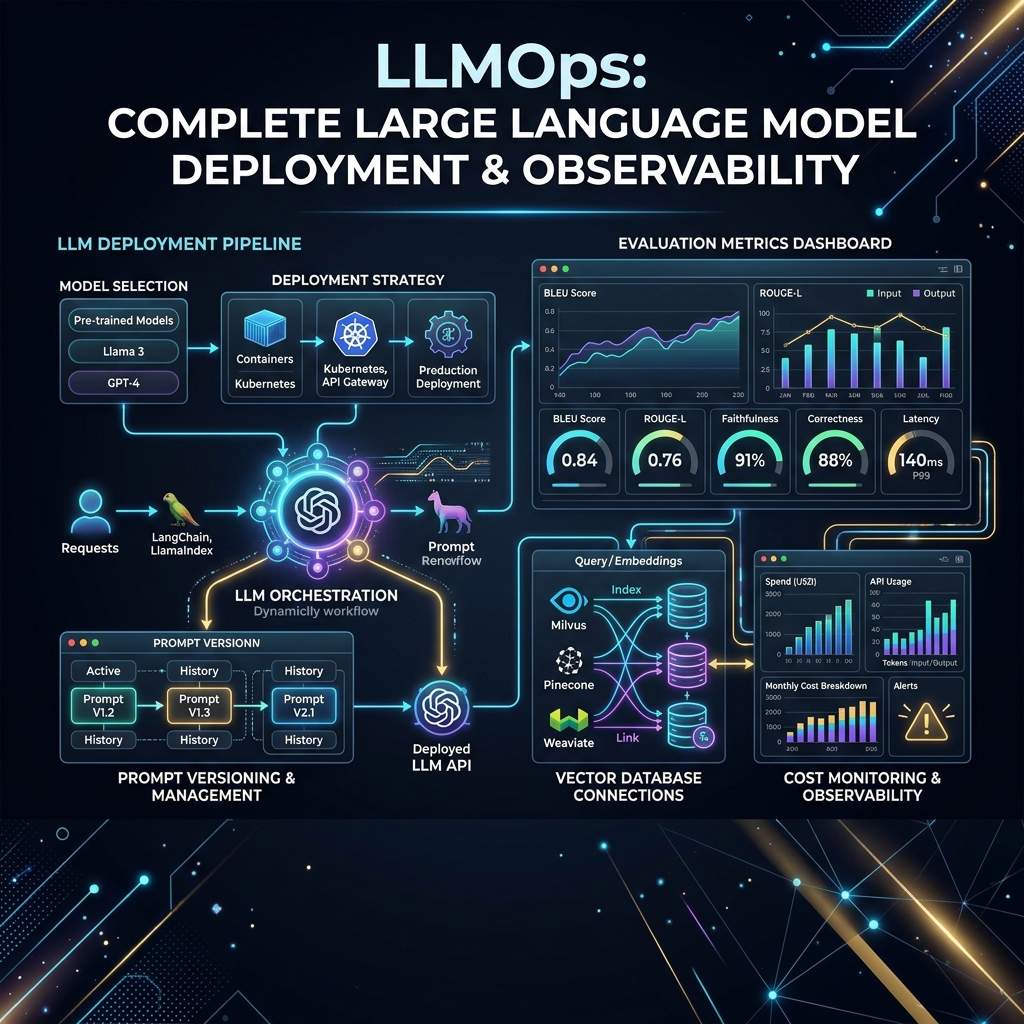

LLMOps (Large Language Model Operations) MLOps ka specialized version hai jo specifically Large Language Models ko develop, deploy, monitor, aur improve karne ke liye practices aur tools ko cover karta hai.

MLOps traditional ML ke liye tha. LLMs ke saath kuch nayi challenges aati hain:

- Models bahut bade hain (billions of parameters)

- Training bahut expensive hai

- Hallucination aur safety issues

- Prompt engineering ka extra dimension

- Context window management

LLMOps vs MLOps — Key Differences

| Aspect | Traditional MLOps | LLMOps |

|---|---|---|

| Model Size | MB se GB | GB se TB |

| Training Frequency | Baar baar retrain | Rarely retrain (mostly fine-tune) |

| Main Knob | Hyperparameters | Prompt engineering |

| Evaluation | Numeric metrics | Human feedback + LLM judges |

| Key Challenge | Data drift | Hallucination, safety, cost |

| Versioning | Model + Data | Model + Prompt + Context |

LLMOps ka Full Stack

1. Prompt Management

Prompts ka version control karna bilkul code ki tarah zaroori hai.

# PromptLayer ya LangSmith se prompt track karo

from langsmith import Client

client = Client()

# Prompt template register karo

prompt_template = client.create_prompt(

name="customer-support-v2",

template="""

Tum ek helpful customer support agent ho for {company_name}.

User ka issue: {user_query}

Guidelines:

- Hamesha polite raho

- Agar sure nahi ho toh honestly batao

- Escalation ke liye yeh steps follow karo: {escalation_steps}

Response:

"""

)

Prompt Versioning Tools:

- LangSmith (LangChain ka tool)

- PromptLayer

- Weights & Biases Prompts

2. LLM Evaluation — Automatic Testing

LLM output evaluate karna manual se automatic karna:

from langchain.evaluation import load_evaluator

from langchain_openai import ChatOpenAI

# LLM-as-judge approach

evaluator = load_evaluator(

"criteria",

criteria="helpfulness",

llm=ChatOpenAI(model="gpt-4o")

)

eval_result = evaluator.evaluate_strings(

prediction="Yeh ek bahut accha question hai...",

input="Python mein loops kaise likhte hain?",

)

print(eval_result["score"]) # 0-1 score

print(eval_result["reasoning"]) # Kyun ye score mila

Kya evaluate karein:

- Correctness: Kya answer sahi hai?

- Helpfulness: Kya answer useful hai?

- Safety: Koi harmful content toh nahi?

- Groundedness: (RAG mein) Kya answer documents se supported hai?

3. Observability — Har Interaction Track Karo

from langsmith import traceable

from langchain_openai import ChatOpenAI

@traceable # Har call automatically log hogi

def answer_question(question: str) -> str:

llm = ChatOpenAI(model="gpt-4o-mini")

response = llm.invoke(question)

return response.content

# Ab har call LangSmith dashboard mein dikhi

result = answer_question("AI kya hai?")

Track karo:

- Latency (response time kitna laga)

- Token usage (cost calculate karo)

- Error rates

- User feedback scores

4. Cost Management

LLM APIs expensive ho sakte hain! Cost control strategies:

# Token counting before API call

import tiktoken

def count_tokens(text: str, model: str = "gpt-4o") -> int:

enc = tiktoken.encoding_for_model(model)

return len(enc.encode(text))

def estimate_cost(prompt: str, model: str = "gpt-4o-mini") -> float:

tokens = count_tokens(prompt)

# gpt-4o-mini: $0.15 per 1M tokens

return (tokens / 1_000_000) * 0.15

# Caching — same question, same answer

from functools import lru_cache

@lru_cache(maxsize=1000)

def cached_llm_call(question: str) -> str:

return expensive_llm_call(question)

Cost Optimization Tips:

- Smaller model use karo jab possible (mini vs full)

- Semantic caching implement karo

- Prompt compression karo (unnecessary text hatao)

- Batch processing use karo

- Streaming use karo (perceived latency kam ho)

5. Safety & Guardrails

from guardrails import Guard

from guardrails.hub import ToxicLanguage

# Output safety check

guard = Guard().use(ToxicLanguage, threshold=0.5, on_fail="refrain")

response = guard(

llm_api=openai.chat.completions.create,

model="gpt-4o-mini",

messages=[{"role": "user", "content": user_input}]

)

Safety Checks:

- Toxic/harmful language detection

- PII (Personal Information) removal

- Hallucination detection

- Prompt injection prevention

6. Fine-tuning vs RAG vs Prompting

When to use what:

| Approach | Kab Use Karein | Cost |

|---|---|---|

| Prompt Engineering | Domain knowledge sirf context mein chahiye | Lowest |

| RAG | Large knowledge base, frequently updated data | Medium |

| Fine-tuning | Specific style, format, or domain adaptation | Highest |

7. A/B Testing Prompts

import random

def get_response(query: str) -> str:

# 50/50 split between two prompt versions

if random.random() < 0.5:

prompt = f"[Version A] Answer this helpfully: {query}"

version = "A"

else:

prompt = f"[Version B] You are an expert. {query}"

version = "B"

response = llm.invoke(prompt)

# Track which version performed better

log_experiment(version=version, query=query, response=response)

return response

LLMOps Tools Ecosystem

| Category | Tools |

|---|---|

| LLM Frameworks | LangChain, LlamaIndex, Haystack |

| Observability | LangSmith, Helicone, Braintrust |

| Prompt Management | PromptLayer, Humanloop |

| Evaluation | RAGAS, DeepEval, TruLens |

| Safety/Guardrails | Guardrails AI, LlamaGuard |

| Serving | vLLM, TGI, Ollama |

| Vector DBs | Pinecone, Weaviate, ChromaDB |

LLMOps Engineer ka Career

Skills needed:

- Python programming

- LLM APIs (OpenAI, Anthropic, Google)

- LangChain/LlamaIndex

- Cloud platforms

- MLOps basics (Docker, CI/CD)

Salary Range (India 2026):

- Junior: 12-20 LPA

- Mid: 20-40 LPA

- Senior: 40-80 LPA

FAQs

1. Kya MLOps jaanna zaroori hai LLMOps seekhne ke liye? Helpful hai par strictly zaroori nahi. LLMOps se directly bhi shuru kar sakte hain.

2. LangChain aur LlamaIndex mein kya farq hai? LangChain general-purpose LLM application framework hai. LlamaIndex RAG applications ke liye specifically optimized hai.

3. LLMOps kab zaroori hota hai? Jab aap prototype se production mein jaate ho — multiple users, reliability requirements, cost tracking sab zaroori ho jaata hai.

4. Kya open source tools se pura LLMOps stack ban sakta hai? Haan! MLflow + LangChain + ChromaDB + Grafana — pura open source stack available hai.

5. Vibe coding vs LLMOps mein kya relate karta hai? Vibe coding prototype ke liye hai. LLMOps production ke liye. Dono ka apna time aur place hai!

LLMOps mein sabse interesting kya laga? Share karein aur discuss karein! 🤖