AI ki sabse badi khoobi ye hai ki wo apni galtiyon se seekhta hai. Lekin computer koi insaan nahi hai jise aap daant kar bol sakein "Beta, agli baar sahi karna". Computer ko math ki bhasha mein batana padta hai ki usne kitni galti ki aur use kis direction mein sudharna hai. Is "Sudharne ki process" ka naam hi Calculus hai.

1. Derivative: Badlav ki Raftar

Derivative ka matlab hota hai Rate of Change.

- Example: Agar aap accelerator dabate hain, toh speed kitni fast badal rahi hai? Ye derivative hai.

- AI mein use: AI model jab prediction karta hai, toh usmein ek "Loss" (Galti) hota hai. Derivative humein batata hai ki agar hum model ke weights ko 1% badlein, toh Error kitna kam hoga.

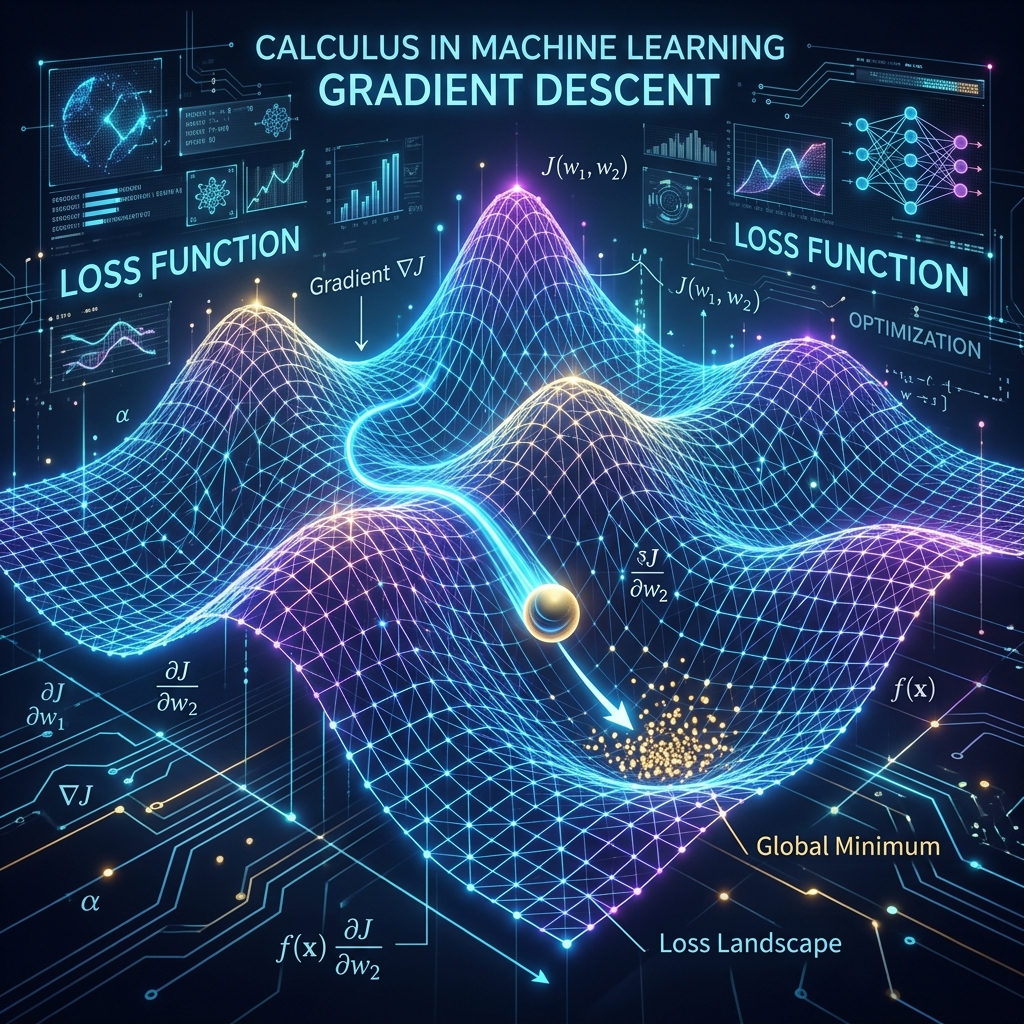

2. Gradient Descent: Pahaad se neeche utarna

Imagine kijiye aap ek pahaad (High Error) par khade hain aur aapko neeche valley (Low Error) mein jana hai, par wahan bahut dhundh (fog) hai.

- Aap apne pairon se dhalan (slope/gradient) check karenge.

- Jis taraf slope sabse zyada neeche ja rahi hai, wahan ek kadam badhayenge.

- Phir slope check karenge... aur tab tak chalenge jab tak sabse neeche na pahunch jayein. Is logic ko hi hum Optimization kehte hain.

3. Chain Rule: Backpropagation ka Engine

Deep Learning mein kai layers hoti hain.

- Humein Error (Layer 10) se piche Layer 1 tak jana hai aur har weight ko theek karna hai.

- Chain Rule humein batata hai ki in layers ke badlav ko multiply karke piche kaise pahuncha jaye. Bina chain rule ke Deep Learning namumkin hoti.

4. Learning Rate: Kadam ka Size

- High Learning Rate: Aap pahaad se kood jayenge aur seedha doosre pahaad par pahunch jayenge. Valley kabhi nahi milegi (Divergence).

- Low Learning Rate: Aapko valley tak pahunchne mein 10 saal lag jayenge. Sahi learning rate chunna hi ek AI Engineer ka sabse bada challenge hota hai.

5. Summary Table: Calculus Concepts

| Term | Simple Meaning | AI Role |

|---|---|---|

| Derivative | Slope / Dhalan | Finding direction of improvement |

| Gradient | Multi-variable slope | Updating multiple weights at once |

| Chain Rule | Step-by-step impact | Backpropagation engine |

| Minima | Lowest Point | Achieving zero error |

FAQs

1. Kya mujhe differentiation ke formulas ratne honge? Nahi! PyTorch aur TensorFlow mein "Autograd" hota hai jo derivatives apne aap nikaal leta hai. Aapko sirf "Logic" samajhna hai.

2. "Partial Derivative" kya hai? Jab hamare paas 100 variables hon, toh partial derivative humein sirf 1 variable ka asar batata hai baaki sab ko constant rakh kar.

3. "Jacobian Matrix" kya hoti hai? Ye derivatives ka ek table hota hai jo humein batata hai ki multiple inputs ka multiple outputs par kya asar pad raha hai. Ye advanced research mein use hota hai.

4. Calculus AI ke training mein kab use hota hai? Har "Batch" ke baad. Model prediction karta hai (Forward), phir calculus se galti nikaali jati hai aur weights update hote hain (Backward).

Calculus ke bina AI sirf ek "Static" code hai. Calculus hi wo energy hai jo use har kadam par "Better" banati hai! 📉

Tarun ke baare mein: Tarun mathematical optimization aur gradient-based learning dynamics ke specialist hain. AI-Gyani par har derivative logic-driven hai.