Ek purana sawaal hai: "Machine learning model training ke waqt actually hota kya hai?"

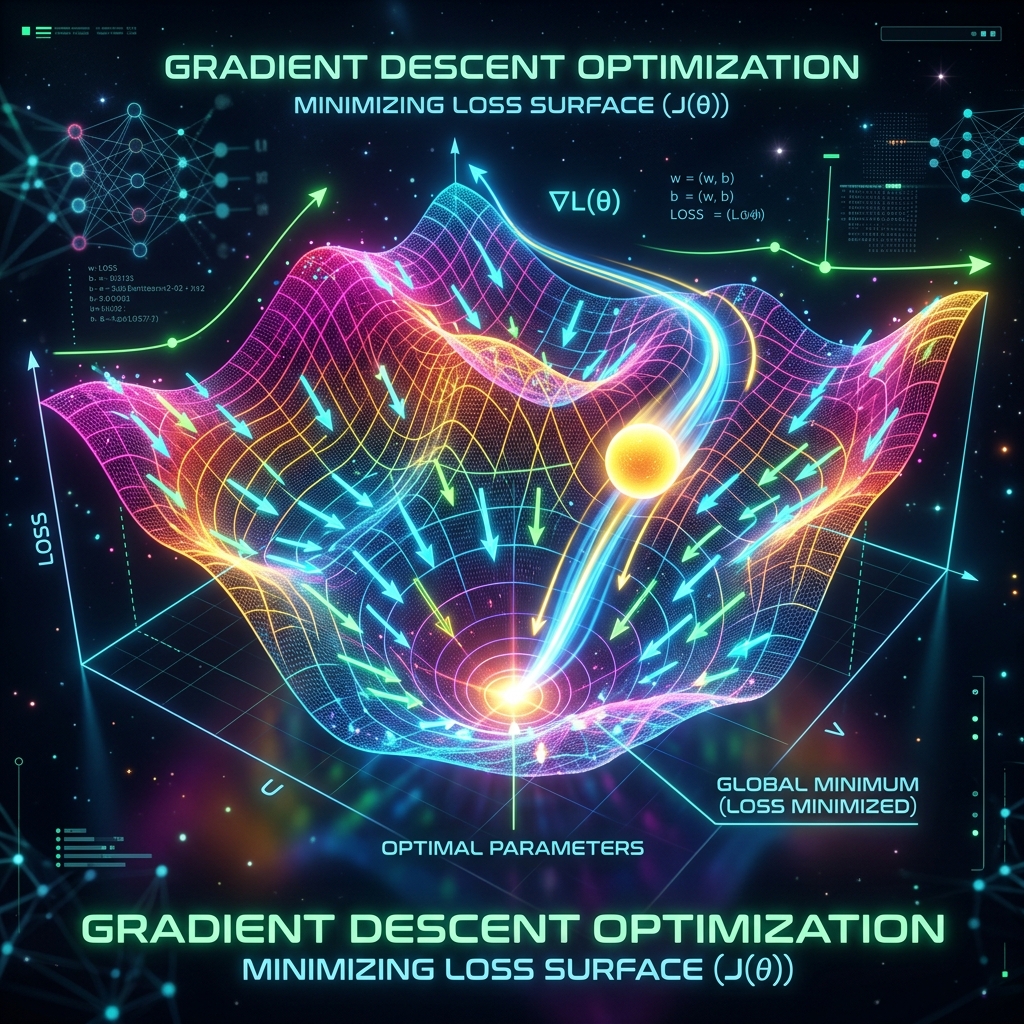

Insaan jab seekhta hai, toh wo dimag lagata hai. Lekin computer jab seekhta hai, toh wo sirf ek math operation karta hai jise hum Optimization kehte hain. Iska goal simple hai — Loss (Galti) ko kam se kam karna. Is process ka sabse bada hero hai Gradient Descent.

1. Gradient Kya Hai? (The Compass)

Gradient ek mathematical "Arrow" hai jo batata hai ki pahaad par sabse unchi jagah (Max Loss) kahan hai. Humein pahaad se neeche utarna hai, isliye hum hamesha Gradient ke Ulte direction mein kadam badhate hain.

- Zero Gradient: Iska matlab hai aap ya toh pahaad ki choti par hain, ya valley (gadda) ke sabse neeche point par.

2. Advanced Optimizers: Adam, Momentum aur SGD

Normal Gradient Descent thoda "Bholu" hota hai — wo bas seedha neeche utarta hai. Par real-world problems mein humein smart algorithms chahiye.

A. Momentum (Bhaari Gend)

Imagine kijiye ek heavy ball pahaad se ludhak rahi hai. Wo raste mein aane wale chhote gadon (Local Minima) mein nahi phasti, balki apni raftaar se unhe paar karke asli valley (Global Minima) tak pahunchti hai.

B. Adam (Adaptive Moment Estimation)

Ye aaj ke zamane ka sabse popular optimizer hai. Ye har variable ke liye "Learning Rate" ko apne aap adjust karta hai. Agar koi weight fast badal raha hai, toh ye use control karta hai, aur jo slow hai use fast karta hai.

3. Vanishing Gradients: Jab AI seekhna band kar de

Deep Learning mein jab layers bahut zyada ho jati hain, toh piche wali layers tak pahunchte-pahunchte Gradient itna chhota ho jata hai ki weights badalna band ho jate hain. Ise Vanishing Gradient kehte hain. Solution: Isse bachne ke liye hum ReLU activation functions aur Batch Normalization use karte hain.

4. Saddle Points: AI ka sabse bada dushman

Kabhi-kabhi hum ek aisi jagah phans jate hain jahan ek taraf se toh slope neeche ja rahi hai, par doosri taraf se flat hai. Ise Saddle Point kehte hain (jaise ghode ki kaathi). Yahan Gradient zero ho jata hai aur model sochta hai ki kaam ho gaya, jabki asli valley abhi door hai.

5. Summary Table: Optimization Toolkit

| Tool | Benefit | Best Use Case |

|---|---|---|

| SGD | Simple aur Fast | Chhote datasets, basics |

| Momentum | Speed badhata hai | Jab data mein bahut noise ho |

| Adam | Smart aur Reliable | Standard choice for 99% AI tasks |

| Learning Rate | Step size | Training ki speed control karna |

FAQs

1. Optimizer aur Loss Function mein kya fark hai? Loss function batata hai "Galti kitni hai". Optimizer us galti ko dekh kar batata hai "Ab weights ko kaise sudharein".

2. Kya Adam hamesha best hota hai? Zyadatar haan, par research projects mein kabhi-kabhi SGD better results deta hai agar use dhyan se tune kiya jaye.

3. Global Minima milna kitna zaroori hai? AI mein humein hamesha "Perfect" (Global) point nahi milta. Agar hum "Kaafi Accha" (Local) point bhi dhoond lein jahan loss kam ho, toh model acche se kaam karta hai.

4. Training "Diverge" hone ka kya matlab hai? Agar aapka Learning Rate bahut zyada hai, toh model galti kam karne ke bajaye galti badhane lagta hai. Ise "Exploding Gradient" kehte hain.

Optimization hi wo jaadu hai jo ek random code ko "Intelligence" mein badalta hai! 📉

Tarun ke baare mein: Tarun AI models ko optimize karne aur unhein fast banane ke master hain. AI-Gyani par har step efficient hai.