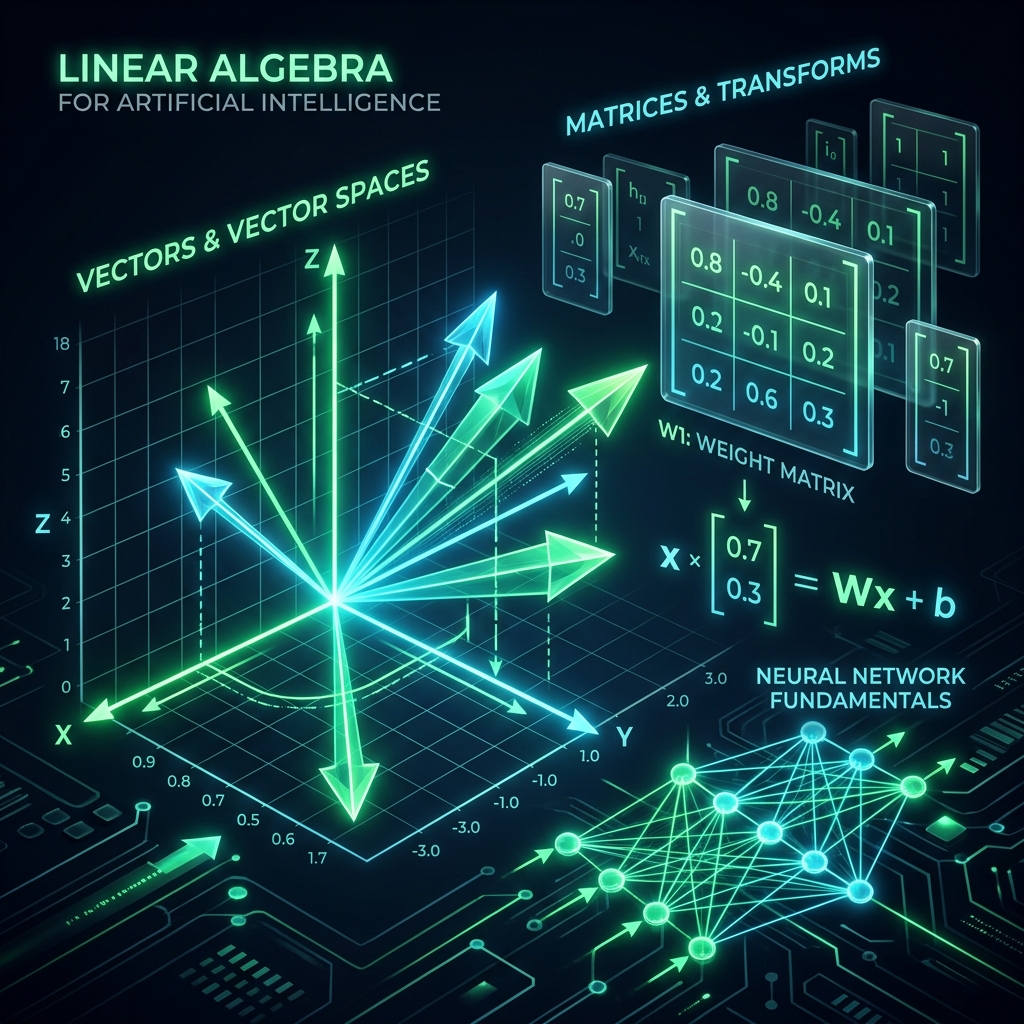

AI computers ko bhasha ya images samajh nahi aati, unhein sirf Numbers samajh aate hain. Agar aap ChatGPT se puchte hain "Hello", toh uske liye wo [0.12, -0.45, 0.67...] jaisa ek lamba vector hai. In numbers ko manage karne ki math ko hi hum Linear Algebra kehte hain. Bina iske, na toh Google Search kaam karega, na hi Face ID.

1. Data Hierarchy: Scalar se Tensor tak

- Scalar (0D): Sirf ek number. Jaise "Ghar ki age: 10 saal".

- Vector (1D): Ek list. Jaise "Ghar ke features: [3 rooms, 1500 sqft, 10 years]".

- Matrix (2D): Ek table. Jab hum hazaron gharon ka data rows aur columns mein rakhte hain.

- Tensor (3D+): Color images tensors hote hain kyonki unmein R, G, B ki 3 alag-alag matrices hoti hain.

2. Matrix Multiplication: AI ka Engine

Neural Networks andar hi andar sirf Matrix Math karte hain.

- Jab ek layer se doosri layer par data jata hai, toh wo Weights Matrix se multiply hota hai.

- Dot Product: Ye batata hai ki do vectors aapas mein kitne "Miltay-jultay" hain. Similarity search isi par base hai.

3. Eigenvalues aur Eigenvectors: Data ka Compass

Ye sunne mein bhari lagte hain par concept simple hai.

- Eigenvector: Wo direction jahan data sabse zyada "Stretch" hota hai bina apni direction badle.

- Eigenvalue: Wo "Scale" ya "Takat" jo batati hai ki stretching kitni ho rahi hai. AI Use: PCA (Dimensionality Reduction) mein hum eigenvectors nikaalte hain taaki pata chale ki 100 features mein se kaunse 2 sabse important hain.

4. SVD (Singular Value Decomposition)

SVD ek aisi technique hai jo ek badi matrix ko teen chhoti matrices mein tod deti hai.

- Real use: Netflix recommendation mein!

- Agar 1 crore users hain aur 1 lakh movies, toh Matrix bahut badi ho jayegi. SVD use karke hum ise "Compress" karte hain taaki patterns (jaise user ko Action movies pasand hain) nikal sakein.

5. Summary Table: Linear Algebra in Action

| Concept | AI Application | Why? |

|---|---|---|

| Vectors | Word Embeddings | Meaning representation |

| Matrices | Grayscale Images | Pixel grids (0-255) |

| Dot Product | Attention Mechanism | Finding relevant words |

| Tensors | Color Video / Images | Multidimensional data grids |

FAQs

1. Kya mujhe haath se matrix math karna hoga? Nahi, Python ki NumPy library ye minto mein kar deti hai. Aapko sirf "Intuition" chahiye ki piche kya ho raha hai.

2. Tensor aur Matrix mein asli fark? Matrix sirf 2D hai. Tensor koi bhi N-dimensional grid ho sakta hai. AI training (TensorFlow) poori tarah tensors par hi tika hai.

3. "Inverse Matrix" kyon zaroori hai? Linear Regression mein equations solve karne ke liye humein matrix inverse nikaalna padta hai. Ise "Math ka undo button" samjhein.

4. GPU kyon zaroori hai Linear Algebra ke liye? Kyonki GPU ek saath hazaron Matrix Multiplications kar sakta hai (Parallel processing), jo CPU nahi kar pata.

Linear Algebra AI ki bhasha hai. Ise samajh liya toh aap code nahi, balki "Data" likhna seekh jayenge! 🔢

Tarun ke baare mein: Tarun mathematical structures ko intuitive visualizations mein badalne ke expert hain. AI-Gyani par har formula ka ek logical maqsad hai.