ChatGPT se kabhi pucha hai: "Kal market mein kya hua?" — Wo kahega uski knowledge 2023 ya 2024 tak hi hai. AI ki memory "Stale" (Purani) hoti hai. Iska solution hai RAG (Retrieval Augmented Generation). RAG AI ko "Zameen" par lata hai aur use aapki private files aur latest news se jhodta hai.

1. The Open Book Analogy

Imagine kijiye ek student hai (AI).

- Bina RAG (Closed Book): Student ne jo bachpan mein padha tha, usi se jawab de raha hai. Wo bhool sakta hai ya jhoot bol sakta hai.

- RAG ke saath (Open Book): Student ko exam mein ek "Kitaab" (Aapka Data) de di jati hai. Wo pehle kitaab padhta hai, sahi page dhoondhta hai, aur phir accurate jawab likhta hai.

2. RAG Pipeline: How it works?

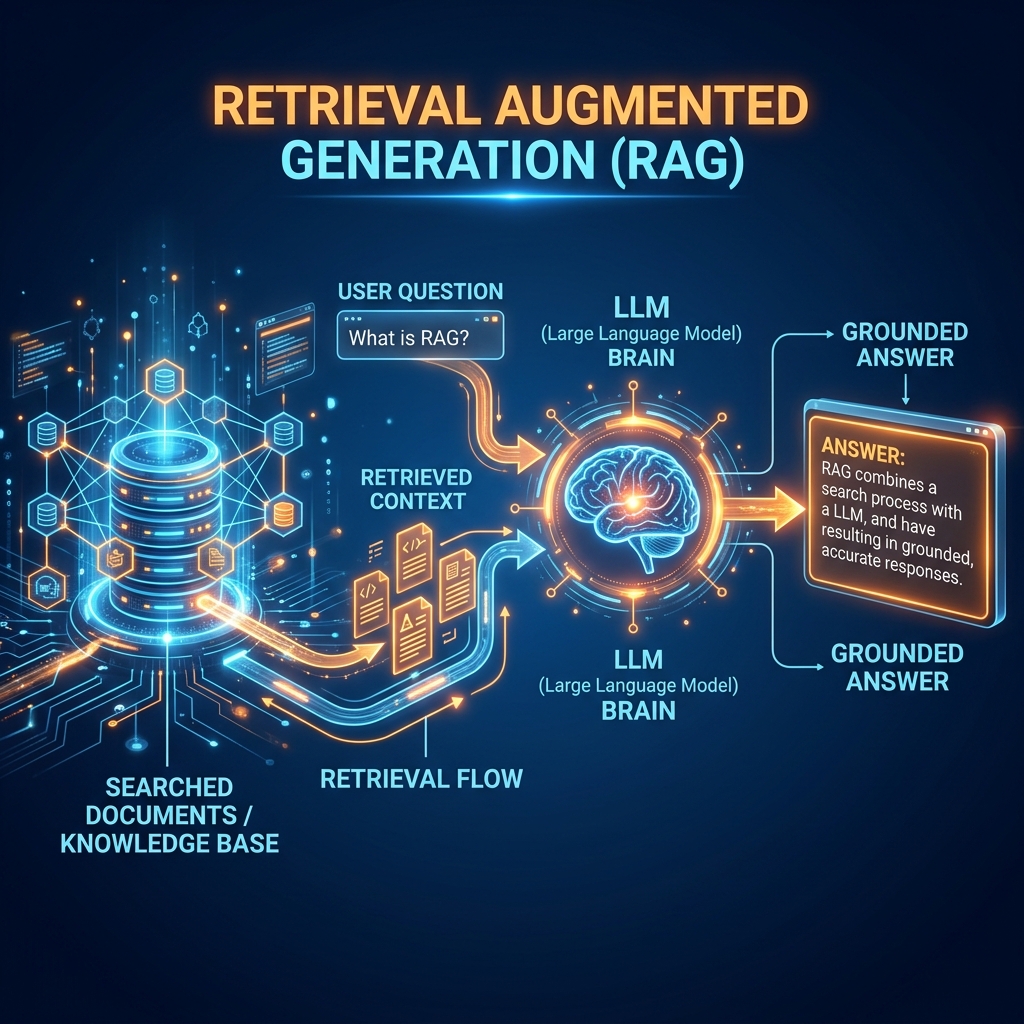

RAG 3 steps mein kaam karta hai:

- Retrieval: Jab aap sawaal puchte hain, toh AI ek Vector Database mein se us sawaal se milte-julte "Chunks" (Paragraphs) dhoondhta hai.

- Augmentation: Un paragraphs ko aapke original sawaal ke saath jhod (Augment) diya jata hai.

- Generation: Ab LLM (GPT-4) ke paas "Fact" aur "Question" dono hain. Wo bina jhoot bole perfect jawab likhta hai.

3. Solving the Hallucination Problem

LLM jhoot (Hallucination) tab bolta hai jab uske paas facts nahi hote par use jawab dena hi hota hai.

- RAG model ko "Grounded" rakhta hai.

- Agar document mein likha hai "Company profit is 10%", toh model kabhi 15% nahi kahega.

- Professional AI apps (Banking, Medical) hamesha RAG use karte hain accuracy ke liye.

4. Vector DB: AI ki Extra Memory

RAG ke liye humein Vector Databases (jaise Pinecone ya ChromaDB) chahiye hote hain.

- Ye SQL databases ki tarah nahi hote.

- Ye data ko "Embeddings" (Vectors) mein store karte hain taaki AI minto mein millions of pages mein se sahi context dhoondh sake.

5. Summary Table: RAG vs Fine-Tuning

| Feature | RAG | Fine-Tuning |

|---|---|---|

| Updates | Real-time (Just add file) | Hard (Need to re-train) |

| Hallucinations | Very Low | Still High |

| Cost | Low (Cheap) | High (GPU expense) |

| Style/Tone | Fixed by Base Model | Customizable |

FAQs

1. "Chunking" kya hai? Aap poori 500-page ki book AI ko nahi bhej sakte (Context limit). Isliye hum book ko chote-chote tukdon (500-1000 words) mein baant-te hain. Ise hi "Chunking" kehte hain.

2. Kya RAG ke liye internet zaroori hai? Agar aapka data local files mein hai, toh internet ki zaroorat nahi hai. Aap local models (Ollama) ke saath bhi RAG setup kar sakte hain.

3. "Hybrid Search" kya hota hai? Jab hum Keyword Match aur Semantic Match dono ko mix karte hain taaki best results milein.

4. 2026 mein RAG ka future? Ab hum Agentic RAG use kar rahe hain, jahan AI khud decide karta hai ki use kab search karna hai aur kab apne dimaag se jawab dena hai.

RAG AI ko "Fact-Checker" banata hai. Ise use karke aap AI ko bharosemand aur intelligent bana sakte hain! 📚

Tarun ke baare mein: Tarun retrieval-grounded architectures aur semantic data retrieval ke specialist hain. AI-Gyani par har jawab factual aur verified hai.