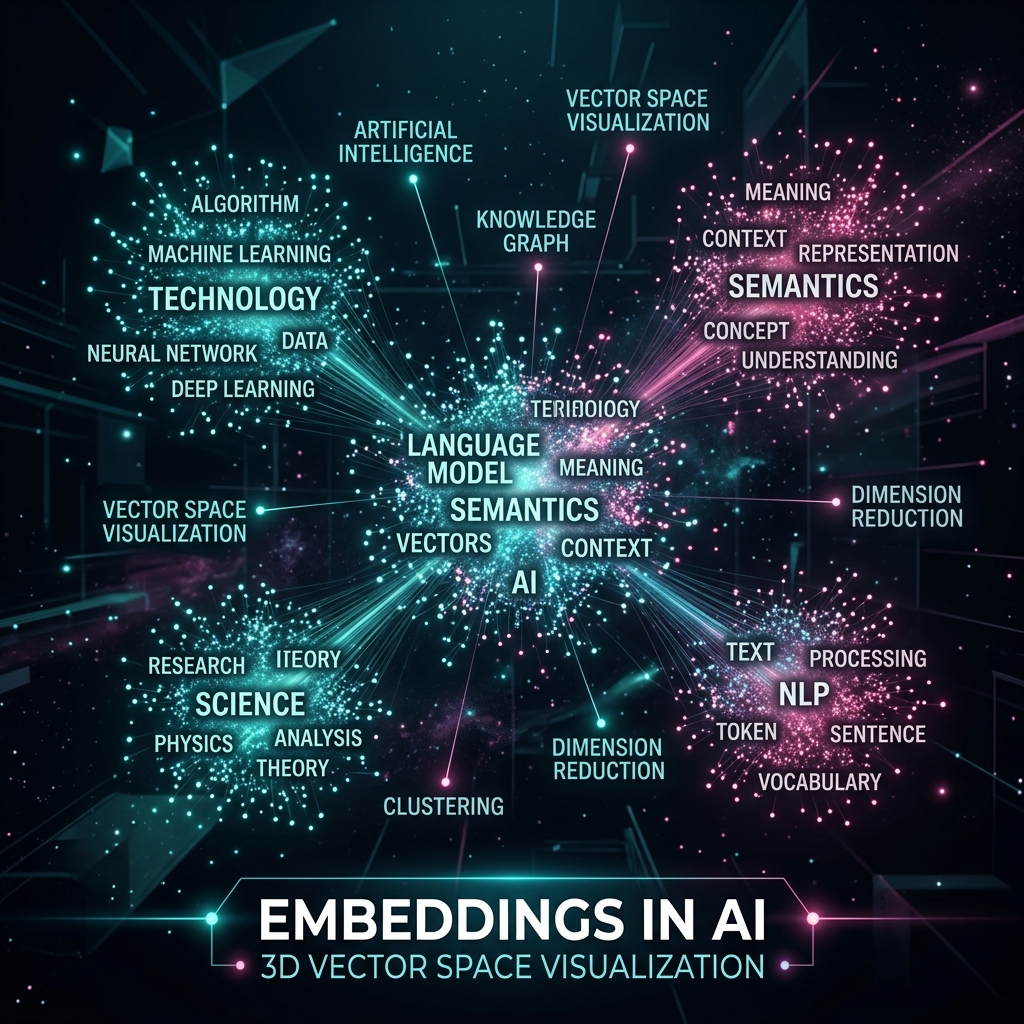

Computer ko "Biryani" nahi pata, use sirf 0 aur 1 pata hain. Lekin aaj ka AI "Biryani" aur "Pulao" ke beech ka rishta samajhta hai. Ye sab possible hai Embeddings ki wajah se. Embeddings AI ka wo "Dimaagi Map" hai jahan shabdon ko numbers (Vectors) mein badal kar unka "Matlab" (Semantics) store kiya jata hai.

1. Vector: Information ka GPS Coordinate

Imagine kijiye ek bahut bada 3D kamra hai.

- "Mobile" ek kone mein hai.

- "Smartphone" bilkul uske pados mein hai.

- "Apple" (Fruit) bahut door doosre kone mein hai. Har word ki is room mein ek unique location hai jise hum Vector kehte hain. AI in coordinates ko use karke samajhta hai ki kaunsa word kiske kitne paas hai.

2. High-Dimensional Space: 1536 Dimensions ka Jadoo

Hamari duniya 3D hai (Height, Width, Depth). Par AI ki duniya 1536-Dimensional ho sakti hai.

- Har dimension ek "Feature" represent karti hai.

- Dimension 1: Kya ye khane ki cheez hai?

- Dimension 2: Kya ye electronic hai?

- Dimension 3: Kya ye gusse wala shabd hai? In hazaron features ko milakar AI kisi bhi word ka "Poora Context" samajh leta hai.

3. King - Man + Woman = Queen

Embeddings ki sabse famous baat hai unka "Math logic".

- Agar aap "King" ke vector se "Male" feature minus kar dein aur "Female" feature add kar dein, toh math ka result exactly "Queen" ke vector par ja kar rukega.

- Iska matlab hai ki AI ko shabdon ke beech ke logic (Genders, Tenses, Relationships) ka mathematical pata hai.

4. Semantic Search vs Keyword Search

- Keyword Search (Puranazama): Agar aapne "Cell Phone" search kiya aur DB mein "Mobile" likha hai, toh result nahi milega.

- Semantic Search (Embeddings): AI vectors ko compare karta hai. Use pata hai ki "Cell Phone" aur "Mobile" ka vector space same hai, isliye wo aapko sahi result dikhata hai. Ise hi Vector Search kehte hain.

5. Summary Table: Vector Space Logic

| Concept | Meaning | Importance |

|---|---|---|

| Embedding | Text to Numbers | Machine comprehension |

| Cosine Similarity | Angle between vectors | Finding similar meanings |

| Centroid | Cluster of similar words | Topic discovery |

| Vector DB | Warehouse for vectors | Scaling search across millions |

FAQs

1. "Cosine Similarity" kya hai? Ye wo formula hai jo do vectors ke beech ka "Angle" dhoondhta hai. Chota angle matlab words bahut similar hain. 90-degree angle matlab koi rishta nahi hai.

2. Embeddings kahan se aate hain?

Inhein "Embedding Models" (jaise OpenAI's text-embedding-3-small) generate karte hain. Aap text bhejte hain aur wo numbers ka ek dher (Array) wapas bhejte hain.

3. "Dense Vector" vs "Sparse Vector" mein kya fark hai? Dense vector (Embeddings) har word ko meaning deta hai. Sparse vector sirf ye dekhta hai ki word list mein hai ya nahi (Keyword matching). Dense vector AI ke liye best hai.

4. 2026 mein embeddings ka use? Ab hum Multimodal Embeddings use kar rahe hain, jahan image aur text ek hi vector space mein hote hain. Isliye aap "Yellow Dress" likh kar exact image search kar pate hain.

Embeddings AI ki "Universal Language" hain. Ye shabdon ko math mein badal kar machine ko insaan se jhodti hain! 🔢

Tarun ke baare mein: Tarun high-dimensional vector spaces aur latent semantic analysis ke specialist hain. AI-Gyani par har vector ka ek gehra maqsad hai.