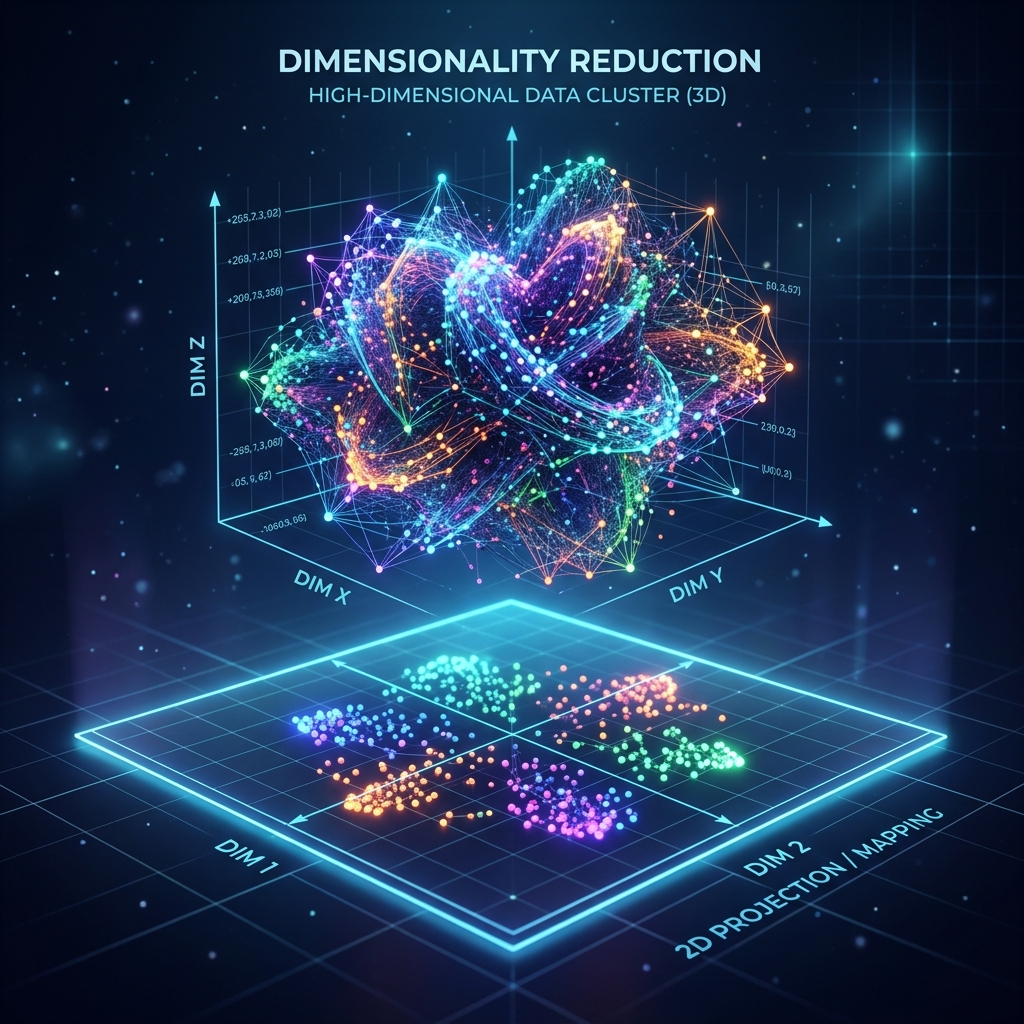

Sochiye aapke paas 100 features hain (Age, Salary, City, Education...). Model in sabko handle karte-karte thak jayega aur slow ho jayega. Ise hum Curse of Dimensionality kehte hain. PCA (Principal Component Analysis) hamari madad karta hai un 100 features ko 2 ya 3 "Super Features" mein badalne mein bina important info khoye.

1. The Core Idea: Information Projection

PCA data ko delete nahi karta, balki use "Squeeze" (Compress) karta hai.

- Principal Components (PCs): Ye naye features hain jo purane features ka mix mixture hain.

- PC1: Ismein poore data ki sabse zyada "Information" (Variance) hoti hai.

- PC2: Ye PC1 ke 90-degree par hota hai aur bachi-kuchi info rakhta hai.

2. Math behind PCA: Eigenvectors & Covariance

Aapko lag raha hoga ye magic kaise hota hai?

- Model pehle Covariance Matrix banata hai ye dekhne ke liye ki features aapas mein kitne jude hain.

- Phir wo Eigenvectors aur Eigenvalues nikalta hai.

- Eigenvector batata hai "Direction" (Rasta) aur Eigenvalue batata hai us raste ki "Importance".

3. Scree Plot: Kitne Components chunu?

Humein kaise pata chalega ki 100 mein se 2 components kaafi hain ya 10?

- Hum Scree Plot banate hain jo batata hai har component kitni variance (info) carry kar raha hai.

- Agar pehle 3 components 95% variance de rahe hain, toh baki 97 features ko hum chhod sakte hain.

4. PCA for Visualization

Insaan sirf 2D ya 3D dekh sakta hai.

- Agar aapko dekhna hai ki aapka 50-dimensional data kaisa dikhta hai (clusters hain ya nahi), toh PCA use 2D mein plot karne ka sabse accha tareeqa hai.

5. Summary Table: PCA vs t-SNE

| Feature | PCA | t-SNE |

|---|---|---|

| Type | Linear | Non-Linear |

| Speed | Super Fast | Slow |

| Preservation | Global structure (Spread) | Local structure (Clusters) |

| Use Case | Feature Reduction | Data Visualization |

FAQs

1. PCA se pehle Scaling kyon zaroori hai? Kyonki PCA "Variance" dhoondhta hai. Agar Salary (100000) aur Age (25) ko scale nahi kiya, toh PCA sochega Salary hi sabse important hai kyonki uska number bada hai.

2. "Explained Variance Ratio" kya hai? Ye wo number hai (e.g., 0.85) jo batata hai ki is specific component ne poore data ki kitni percentage information pakad li hai.

3. Kya PCA hamesha accuracy badhata hai? Nahi, hamesha nahi. Kabhi-kabhi info loss se accuracy gir sakti hai. Lekin ye model ko "Fast" aur "General" banata hai (Overfitting kam karta hai).

4. Kernel PCA kya hai? Standard PCA sirf seedhi lines (Linear) par kaam karta hai. Agar data "Spiral" ya "Circular" hai, toh hum Kernel PCA use karte hain.

PCA bade data ka "Microscope" hai. Ise samajh kar aap kisi bhi complex dataset ko simplify kar sakte hain! 📉

Tarun ke baare mein: Tarun dimensionality reduction aur matrix decomposition ke specialist hain. AI-Gyani par har projection sharp hai.