Aapne model ko 80% data par train kiya aur 20% par test. Accuracy aayi 90%. Kya ye sach hai? Shayad wo 20% data bahut aasaan tha? Is "Kismat" (Luck) ko hatane ke liye hum Cross-Validation use karte hain. Ye model ko data ke har ek kone (corner) par test karta hai.

1. K-Fold: Sabki Baari Aayegi

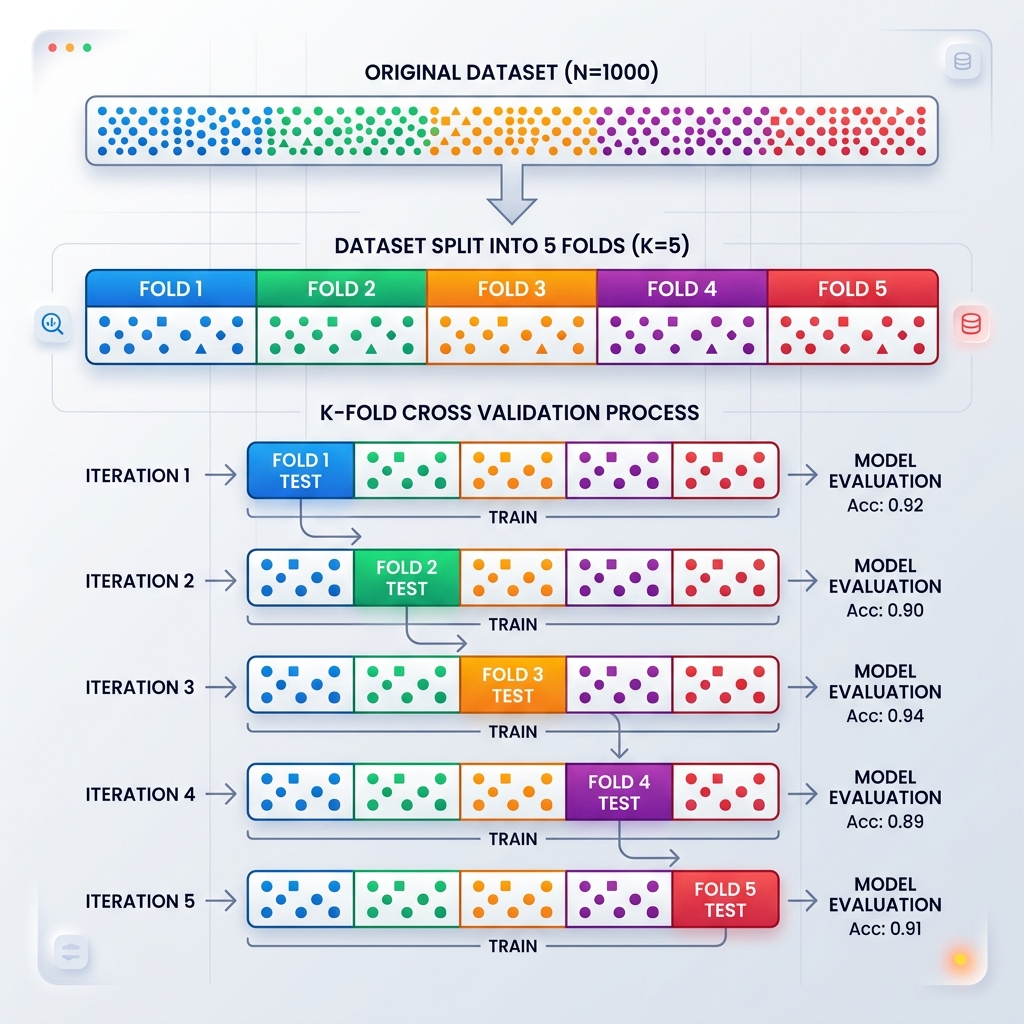

Sochiye data ke 5 tukde hain (K=5).

- Model 4 tukdon par seekhta hai aur 1 par test hota hai.

- Ye process 5 baar chalti hai, har baar ek naya tukda "Test" banta hai.

- Aakhir mein hum inka Average lete hain. Ye accuracy asli hoti hai!

2. Stratified K-Fold: Category Balance

Agar aapke paas 1000 normal transactions hain aur sirf 10 fraud hain.

- Normal K-fold mein ho sakta hai kisi fold mein ek bhi fraud na aaye.

- Stratified K-Fold insure karta hai ki har tukde mein Fraud aur Normal ka ratio wahi ho jo asli duniya mein hai.

3. Nested Cross-Validation: Unbiased Tuning

Ye 2026 ka professional standard hai.

- Inner Loop: Hyperparameters dhoondhne ke liye.

- Outer Loop: Model ki asli accuracy batane ke liye. Isse "Data Leakage" ka khatra zero ho jata hai aur model real-world mein fail nahi hota.

4. LOOCV: Leave-One-Out

Ye tab use hota hai jab hamare paas bahut kam data (e.g., medical trial of 50 people) ho.

- Model 49 logon se seekhta hai aur sirf 1 insaan par test hota hai.

- Ye bahut slow hai par bahut chote data ke liye sabse accurate hai.

5. Summary Table: CV Techniques Comparison

| Technique | When to use? | Key Benefit |

|---|---|---|

| Simple Split | Big Data (>1M) | Fast |

| K-Fold | Medium Data | Robust |

| Stratified | Imbalanced Data | Preserves labels ratio |

| Nested CV | Tuning + Testing | No bias |

FAQs

1. Kya 5-fold CV training ko 5x slow kar deta hai? Haan! Kyonki model 5 baar train hota hai. Isliye 100-fold CV kabhi mat karein, 5 ya 10 kaafi hai.

2. "Data Leakage" kaise rokain? Hamesha Pipelines use karein. Scaling aur Preprocessing har fold ke andar honi chahiye, poore data par pehle nahi.

3. Kya Cross-validation production mein kaam aata hai? Nahi, ye sirf training ke waqt "Best Model" chunne ke liye hai. Production mein hum poore data par ek final model train karke bhejte hain.

4. K=10 kyon best hai? Research ke hisab se 10-folds bias aur variance ke beech sabse accha balance dete hain.

Cross-validation aapke model ki "Agni Pareeksha" hai. Jo ismein pass ho gaya, wo real world mein kabhi fail nahi hoga! 🛡️

Tarun ke baare mein: Tarun validation protocols aur unbiased model testing ke specialist hain. AI-Gyani par har accuracy verify-hi-verify hai.