Aapne Midjourney ya DALL-E se images banayi hongi, lekin kya aapne notice kiya ki wo image ek dum se nahi banti? Pehle screen par kuch "Dhudhla" (Noise) dikhta hai aur phir dhire-dhire wo ek saaf photo ban jati hai. Ye process hi Diffusion hai. Ye technology aaj AI image generation ki "King" hai.

1. Core Logic: Forward vs Reverse Diffusion

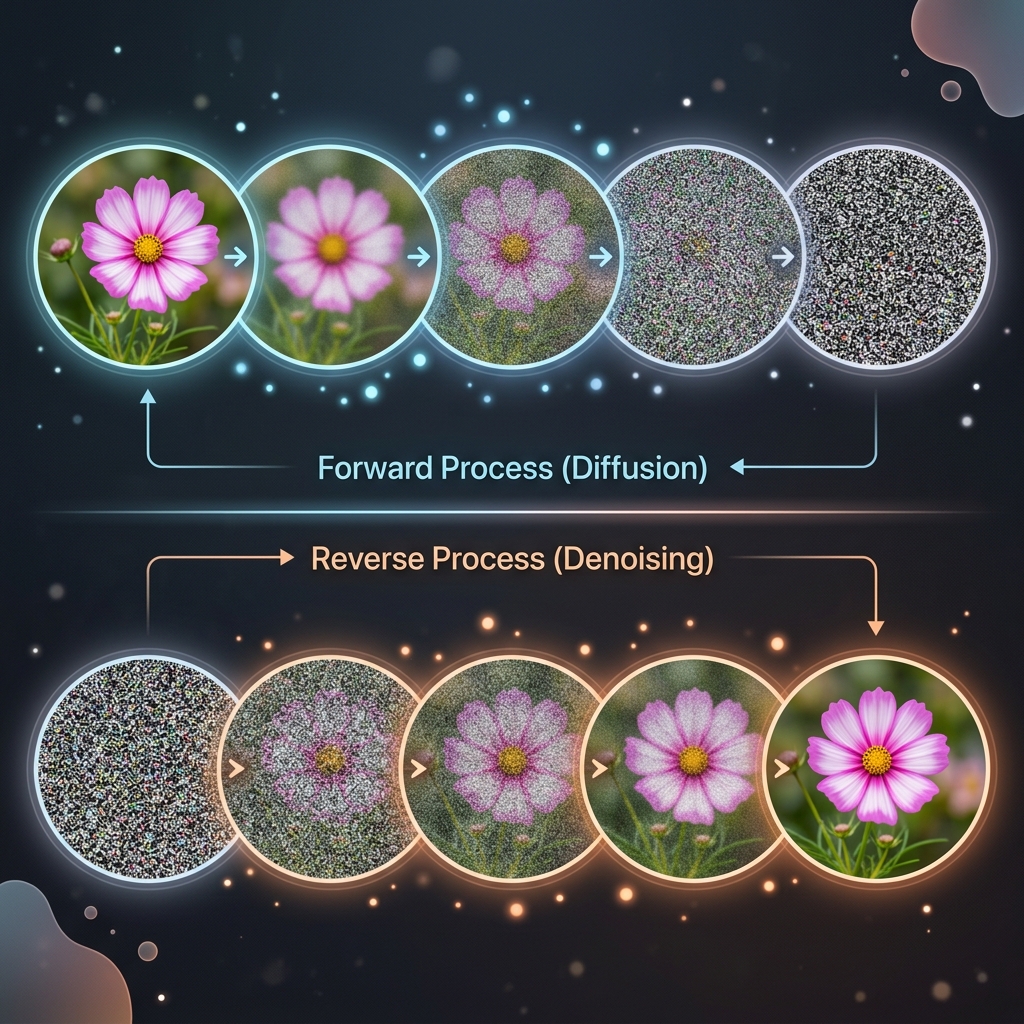

Diffusion model ek simple philosophy par chalta hai:

- Forward Diffusion: Hum ek saaf photo mein tab tak "Noise" (Kachra) milate hain jab tak wo pehchani na jaye. Imagine kijiye aapne "Biryani" ki photo mein dher sara "Namak" (Noise) daal diya.

- Reverse Diffusion: AI seekhta hai ki us kachre ko "Saaf" kaise karna hai. Wo har step par thoda sa kachra hatata hai jab tak asli photo wapas na aa jaye.

2. U-Net Architecture: The Noise Predictor

Is poori process ka dil hai U-Net.

- U-Net ek neural network hai jo image ko compress karta hai aur phir wapas expand karta hai.

- Iska kaam sirf ek hai: "Predict the noise". Ye bataata hai ki image mein kaunsa pixel "Faltu" hai aur use hatana chahiye.

- Ye "Safai Abhiyan" tab tak chalta hai jab tak AI ko "Signal" (Asli data) mil na jaye.

3. CLIP: The Bridge between Words and Pixels

AI ko kaise pata chalta hai ki use "Kutta" banana hai ya "Billi"?

- CLIP (Contrastive Language-Image Pre-training): Ye wo model hai jo Text aur Images ke beech ka "Bridge" hai.

- Ye prompt ko vectors mein badalta hai aur Diffusion model ko guide karta hai ki "Kachra saaf karte waqt, dhayan rahe ki result Ek Kutta dikhna chahiye".

4. Why Diffusion is better than GANs?

Pehle hum GANs use karte the, par ab Diffusion King hai kyonki:

- Diversity: Diffusion models hazaaron alag-alag styles ki images bana sakte hain.

- Stability: Ye train karna asaan hai aur inmein "Mode Collapse" (ek hi jaisi image baar-baar banana) ki problem nahi aati.

- Control: Aap exact prompt se result control kar sakte hain.

5. Summary Table: Diffusion Ecosystem

| Component | What it does? | Why? |

|---|---|---|

| Scheduler | Adds/Removes noise | Controls the speed of generation |

| U-Net | Predicts noise | The actual "Brain" of the model |

| Latent Space | Compresses image | Makes it run on normal laptops |

| Guidance Scale | Follows prompt | High = Strict, Low = Creative |

FAQs

1. "Steps" badhane se image better hoti hai? Ek limit tak haan. 20-50 steps general use ke liye best hain. 500 steps badhane se image change hona band ho jati hai par time bahut lagta hai.

2. Stable Diffusion local PC par kaise chalta hai? Ye "Latent Diffusion" use karta hai. Iska matlab hai ye images ko pixels par nahi, balki ek compressed math space mein process karta hai, jo memory bahut kam leta hai.

3. "Negative Prompt" kya hai? AI ko ye batana ki use kya NAHI banana hai. (e.g., "No blurred faces", "No extra legs").

4. 2026 mein Diffusion ka future? Ab hum Video Diffusion (Sora) aur 3D Diffusion dekh rahe hain, jahan text se poori movie ya 3D game models minto mein ban rahe hain.

Diffusion models AI ki "Creative Brush" hain. Inhone machines ko khayalon ko haqiqat mein badalna sikhaya hai! 🎨

Tarun ke baare mein: Tarun image generation architectures aur noise scheduling optimization ke specialist hain. AI-Gyani par har pixel mathematical perfection hai.