Jab hum "Neural Network" shabd sunte hain, toh dimaag mein wires ka ek jaal banta hai. Par asaliyat mein, ye sirf Mathematics hai jo insaani dimaag ke neurons ki tarah signal pass karta hai. Ek neural network hazaron chote "Cells" se bana hota hai jinhe hum Neurons kehte hain. Inhein hum AI ka "Brain cells" bhi keh sakte hain.

1. Biological vs Artificial: Dimaag ki nakal

Insaani dimaag mein 100 Billion neurons hote hain jo bijli ke jhatkon (signals) se ek dusre se baat karte hain.

- Biological: Dendrite (Input) -> Cell Body (Processor) -> Axon (Output).

- Artificial (ANN): Input $x$ -> Weight $w$ (Importance) -> Activation Function (Logic) -> Output $y$. Dono ka goal ek hi hai: "Pichli jankari se naya pattern pehchanna."

2. Anatomy of a Neuron: Weights & Biases

Har artificial neuron ek math machine hai:

- Weights (w): Ye batate hain ki kaunsa input kitna zaroori hai. (e.g. Price predict karte waqt "Area" ka weight zyada hoga "Paint color" se).

- Bias (b): Ye neuron ko flexibility deta hai. Ise radio ke "Tune" button ki tarah samjhein jo model ko shift karne mein madad karta hai.

- Summation: Sabko milakar ek score banta hai: $z = w_1 \cdot x_1 + w_2 \cdot x_2 + b$.

3. Universal Approximation Theorem: The Magic

Ye Neural Networks ki sabse powerful property hai.

- The Concept: Ye theorem kehti hai ki ek neural network duniya ka koi bhi complex mathematical function seekh sakta hai, chahe wo kitna bhi tedha (non-linear) kyon na ho.

- Isliye Neural Networks face recognition se lekar language translation tak sab kuch kar pate hain.

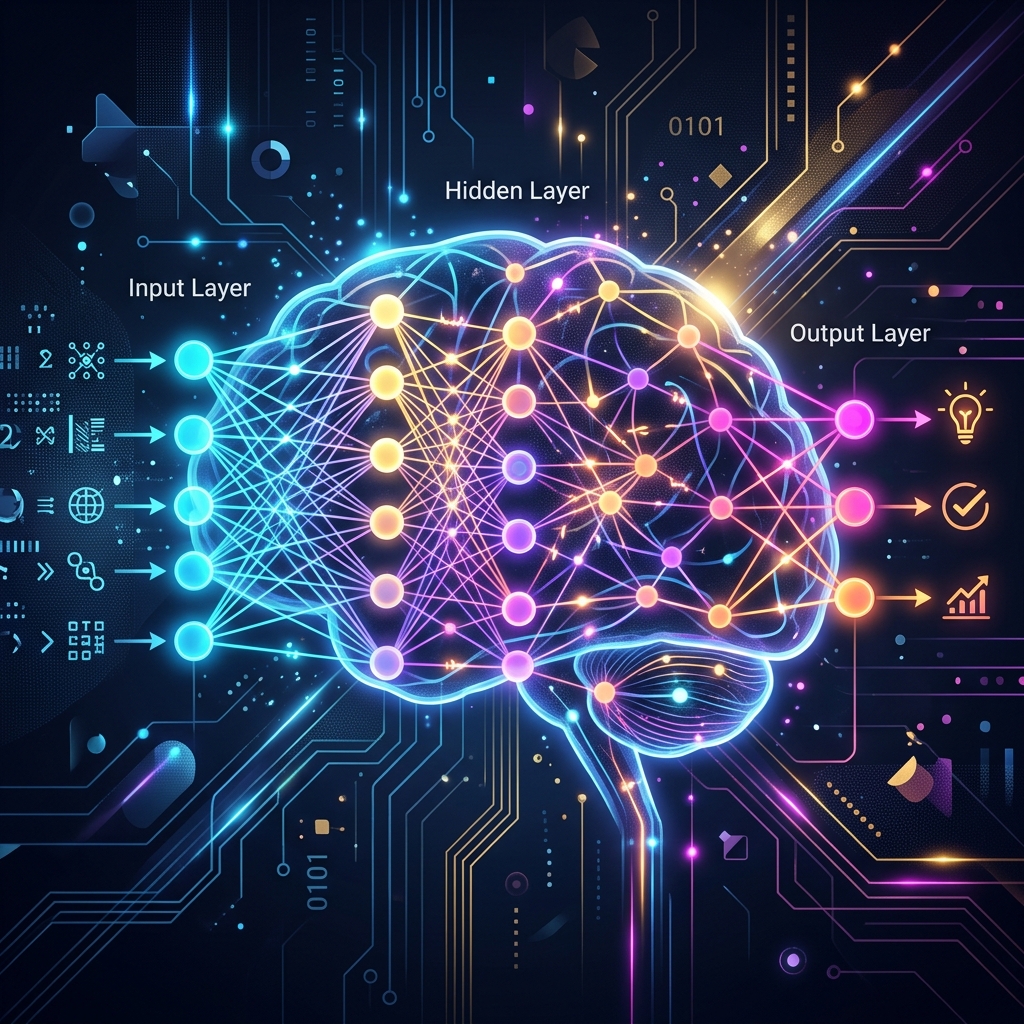

4. Multi-Layer Perceptron (MLP): Layers ka jaal

Ek neuron akela kuch nahi kar sakta. Isliye hum unhein layers mein jodte hain:

- Input Layer: Raw data (Pixels ya Words).

- Hidden Layers: Asli "Thinking" yahan hoti hai. Har layer data mein se ek naya level dhoondhti hai (e.g. pehli layer lines, dusri layer aankhein, teesri layer chehra).

- Output Layer: Final guess (e.g. "Ye Tarun hai").

5. Summary Table: Neural Network Components

| Part | Role | Analogy |

|---|---|---|

| Neuron | Processing Unit | Brain cell |

| Weight | Importance scale | Volume knob |

| Activation | Non-linear trigger | The 'Aha!' moment |

| Bias | Shift adjust | Fine-tuning knob |

| Layer | Depth of thinking | Level of understanding |

FAQs

1. Weights shuruat mein kya hote hain? Weights hamesha Random (Chote numbers) se shuru hote hain. Agar sab zero honge, toh neural network kabhi signal pass hi nahi kar payega. Ise "Symmetry Breaking" kehte hain.

2. "Deep" Learning kyon kehte hain? Kyonki ismein Hidden Layers ki ginti bahut zyada hoti hai. Agar network mein 100-200 layers hon, toh wo bahut "Gehraai" (Deep) se patterns pehchan sakta hai.

3. "Backpropagation" kya hai? Ye model ka "Learning Mechanism" hai. Jab model galti karta hai, toh calculus (Chain Rule) use karke wo piche jata hai aur har weight ko thoda sa badal deta hai.

4. 2026 mein Neural Networks ka trend? Ab hum Sparse Neural Networks use kar rahe hain, jo sirf zaroori neurons ko hi activate karte hain taaki bijli aur computing power kam lage.

Neural Networks AI ka "Heartbeat" hain. Inhein samajh liya toh aapne AI ki asli rooh ko samajh liya! 🧠

Tarun ke baare mein: Tarun mathematical neural modeling aur biological inspiration learning systems ke specialist hain. AI-Gyani par har neuron logical aur efficient hai.