Earlier AI models were effectively "blind" and "deaf": they could only read text. Today's AI can look at a photo, listen to audio, and write text, all at once. This is called multi-modal AI. GPT-4o and Gemini 1.5 are among the best-known examples. It brings machines a step closer to human senses.

1. What is Multimodality?

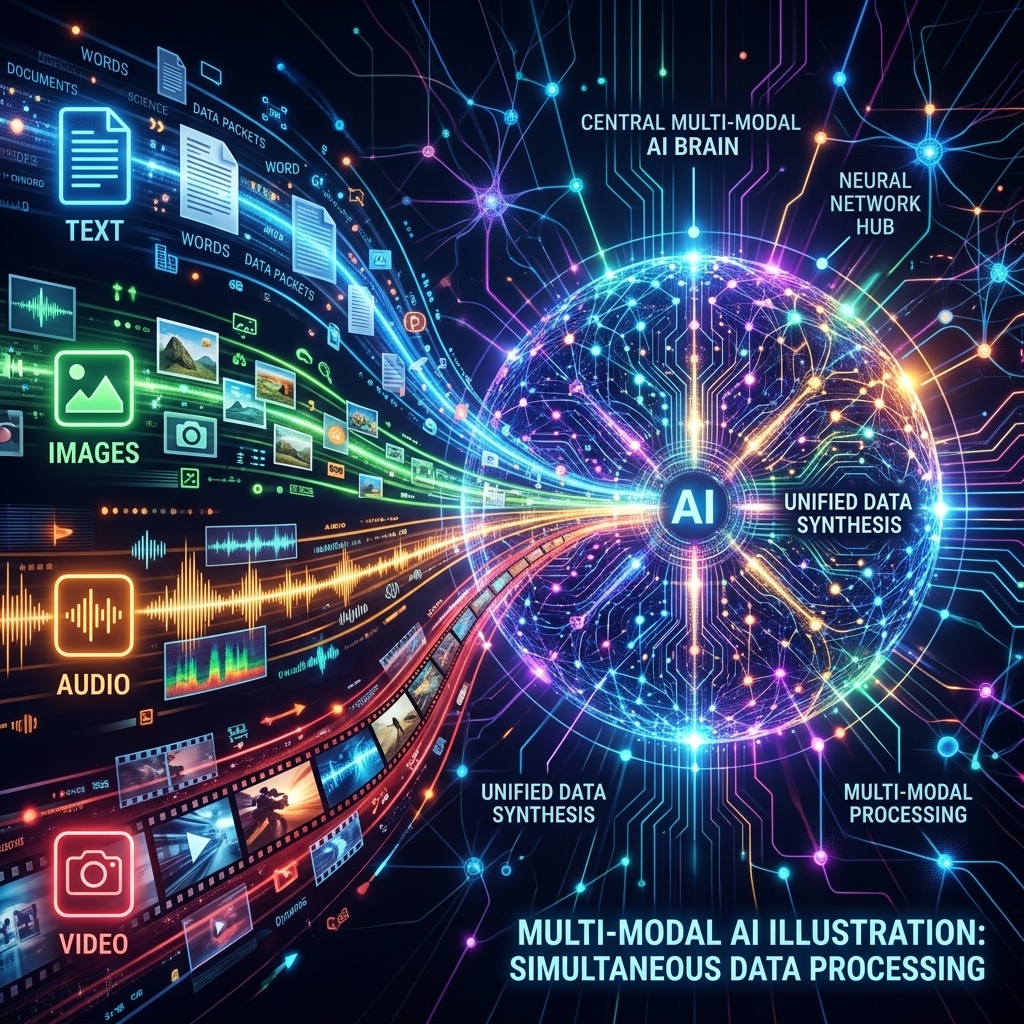

Like human senses, AI now handles multiple types of data (modalities):

- Input: Text, Images, Audio, Video.

- Output: Text, speech, images, or actions (such as moving a robot). You could also call this "Omni-channel Intelligence": the AI does not care whether the information arrived as audio or as a photo.

2. The Logic: Joint Embedding Space

How does AI "read" a photo?

- CLIP (Contrastive Language-Image Pre-training): It learns a "common language", a shared embedding space in which text vectors and image vectors live together.

- In this space, the word "apple" and a photo of an apple land close to each other (not on exactly the same coordinates, but nearby).

- So when you show the AI a photo, it can quickly relate the photo to the text that describes it.
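The idea of a joint embedding space can be sketched with a few hand-written vectors. This is only a toy illustration: the 3-dimensional embeddings below are made up, and `best_caption` is a hypothetical helper, not part of CLIP or any library. A real model would produce these vectors with trained text and image encoders.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Assumption: made-up text embeddings standing in for a text encoder's output.
text_embeddings = {
    "apple": [0.9, 0.1, 0.0],
    "car": [0.0, 0.2, 0.9],
}

# Assumption: made-up image embedding standing in for an image encoder's
# output on a photo of an apple.
image_embedding = [0.8, 0.2, 0.1]

def best_caption(image_vec, text_vecs):
    """Return the label whose text vector is closest to the image vector."""
    return max(text_vecs, key=lambda label: cosine_similarity(image_vec, text_vecs[label]))

print(best_caption(image_embedding, text_embeddings))
```

Because the photo's vector points in nearly the same direction as the vector for the word "apple", the lookup picks that label. Matching across modalities reduces to a nearest-neighbor search in the shared space.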

3. Native Multimodality vs Wrapper

- Wrapper (the old way): Earlier, the image was first converted to text (captioning) and that text was handed to the AI. Tone and emotion were lost along the way.

- Native (the 2026 standard): Models such as GPT-4o now understand images and audio directly. Text, pixels, and audio are trained together in the same "brain" (weights). This is called native multimodality. It is often described as roughly 10x faster and more accurate, though the actual gain depends on the model and the task.

4. Spacetime Patches: Video Generation (Sora)

How do models like Sora generate video?

- They break the video into "spacetime patches" (small chunks that span both space and time).

- These patches play the same role that "tokens" play for text.

- The AI predicts these patches so that the video stays continuous and the physics (gravity, for example) looks right.
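The patching step above can be sketched on a tiny toy video. This is a minimal illustration, not Sora's implementation: it cuts raw pixel values directly, the function name `spacetime_patches` and the patch sizes are assumptions chosen for the example, and production systems may first compress the video into a latent representation before patching.

```python
def spacetime_patches(video, pt, ph, pw):
    """Split video[t][y][x] into flat patches covering pt frames, ph rows, pw columns."""
    frames, height, width = len(video), len(video[0]), len(video[0][0])
    patches = []
    for t0 in range(0, frames - pt + 1, pt):
        for y0 in range(0, height - ph + 1, ph):
            for x0 in range(0, width - pw + 1, pw):
                # Flatten one block of space AND time into a single "token".
                patch = [
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ]
                patches.append(patch)
    return patches

# Toy 4-frame, 4x4 grayscale video; each pixel value encodes its (t, y, x) position.
video = [[[t * 100 + y * 10 + x for x in range(4)] for y in range(4)] for t in range(4)]

patches = spacetime_patches(video, pt=2, ph=2, pw=2)
print(len(patches), "patches, each with", len(patches[0]), "values")
```

Each patch mixes pixels from two consecutive frames, so a model working on a sequence of these patches sees motion as well as appearance.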

5. Summary Table: Multimodal Comparison

| Modality | Processor | Output Logic |
|---|---|---|
| Text | Transformer | Meaning prediction |
| Image | ViT (Vision Transformer) | Spatial relationship |
| Audio | Spectrograms | Tonal & Pitch analysis |
| Video | 3D Transformers | Temporal (Time) flow |

FAQs

1. What is the difference between "Late Fusion" and "Early Fusion"?

- Late Fusion: Use separate models for each modality and combine their results at the end.

- Early Fusion: Train on text and images together from the start. Native models use early fusion.
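The difference can be made concrete with a toy sketch. Everything here is an assumption made for illustration: the feature vectors, the weights, and the use of a simple linear scorer in place of a real neural network. In a native multimodal model, early fusion usually means one network processes tokens from all modalities together.

```python
def linear_score(features, weights):
    """A stand-in 'model': a weighted sum of its input features."""
    return sum(f * w for f, w in zip(features, weights))

def late_fusion(text_feat, image_feat, text_w, image_w):
    """Score each modality with its own model, then average the results."""
    text_score = linear_score(text_feat, text_w)
    image_score = linear_score(image_feat, image_w)
    return 0.5 * text_score + 0.5 * image_score

def early_fusion(text_feat, image_feat, joint_w):
    """Concatenate the features first, so a single model sees both modalities."""
    joint_features = text_feat + image_feat
    return linear_score(joint_features, joint_w)

# Hypothetical features and weights, chosen only to make the arithmetic easy.
text_feat, image_feat = [1.0, 2.0], [3.0]
late = late_fusion(text_feat, image_feat, text_w=[0.5, 0.5], image_w=[1.0])
early = early_fusion(text_feat, image_feat, joint_w=[0.5, 0.5, 1.0])
print(late, early)
```

In late fusion the two models never see each other's input. In early fusion the single model's weights can, in principle, learn interactions between text and image features.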

2. Is multi-modal AI expensive? Yes! Image and video processing can take far more compute than text. Figures like 100x are a rough order of magnitude, and the real ratio varies with resolution and video length. That is why multimodal APIs tend to cost more.
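A rough way to see where the extra compute comes from is to count the patches a ViT-style encoder would produce. The sketch below assumes square, non-overlapping patches and a hypothetical clip of 16 frames. It does not model how any particular API bills image or video input.

```python
def image_patch_count(height, width, patch_size):
    """Number of non-overlapping square patches in one image."""
    return (height // patch_size) * (width // patch_size)

def video_patch_count(frames, height, width, patch_size):
    """Patches for a clip when every frame is patched independently."""
    return frames * image_patch_count(height, width, patch_size)

# A 224x224 image with 16x16 patches, a common ViT configuration.
per_image = image_patch_count(224, 224, 16)

# Assumption: a short 16-frame clip at the same resolution.
per_clip = video_patch_count(16, 224, 224, 16)

print(per_image, per_clip)
```

A single small image already becomes a couple of hundred tokens and a short clip becomes thousands, while a sentence of text is typically only a few dozen tokens.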

3. What is the "Vision-Language Gap"? Sometimes the AI does look at the photo but misses small details (such as text written in the background). "High-resolution encoders" are used to address this.

4. What does the future look like in 2026? We are now seeing action-oriented multimodality, where the AI looks at your computer screen and clicks buttons on its own (Agentic UI).

Multi-modal AI gives machines "human senses". When AI starts to see and hear, it is no longer just a program but a companion! 🌐

About Tarun: Tarun is a specialist in cross-modal data alignment and unified representation learning. At AI-Gyani, every sense is optimized.