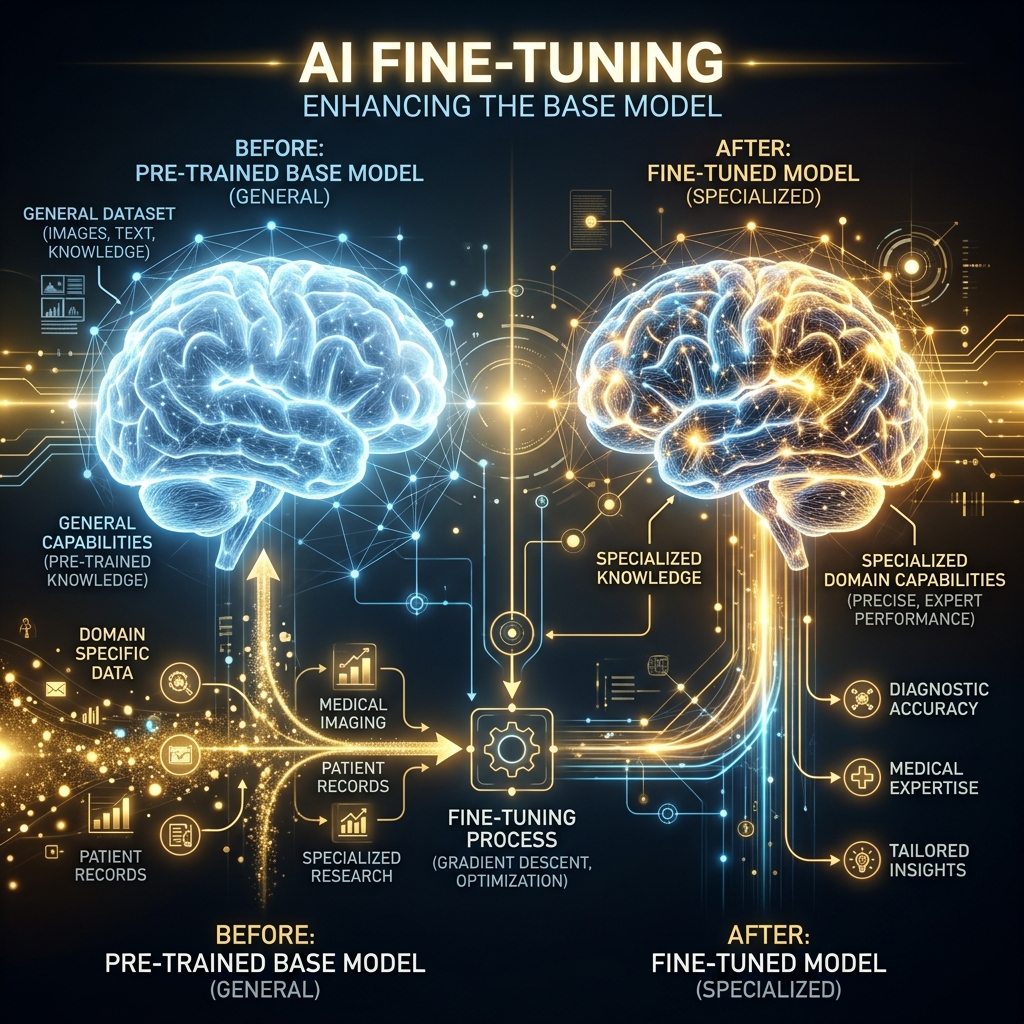

Aapne Llama-3 ya GPT-4 use kiya hoga. Ye "All-rounders" hain, par inhein aapki company ki policy ya aapke doctor ke style ka pata nahi hota. Ise hum Fine-tuning se theek karte hain. Ise model ki "Specialization" samjhein — jaise ek MBBS doctor ko "Cardiologist" banana.

1. Fine-tuning vs RAG: Confuse na hon!

- RAG (Retrieval Augmented Generation): AI ko ek "Kitaab" (External Knowledge) pakda dena. Ye asaan aur sasta hai.

- Fine-tuning: AI ke "Dimaag" (Weights) ko hi badal dena taaki wo ek naye "Style" ya "Behavior" mein bole. 2026 mein dono ka mix use hota hai.

2. LoRA: Low-Rank Adaptation (Budget Friendly)

Bade models (70 Billion parameters) ko train karna namumkin tha. Phir aaya LoRA.

- Ye model ke main weights ko touch nahi karta.

- Ye model ke saath 2 choti "Side-matrices" add kar deta hai.

- Sirf in choti matrices ko train karne se model naya kaam seekh jata hai. Isse GPU kharcha 90% kam ho jata hai.

3. QLoRA: Laptop par Fine-tuning

LoRA ke baad aaya QLoRA.

- Ye model ko "Quantize" (4-bit mein nichodna) kar deta hai.

- Ab aap Llama-3 jaise bade model ko ek normal gaming laptop (12GB VRAM) par fine-tune kar sakte hain. 2026 mein har developer apna "Personal AI" aise hi bana raha hai.

4. Fine-tuning Pipeline: Step-by-Step

- Dataset Creation: "Question-Answer" pairs ka JSON data banayein.

- Formatting: Data ko model ki bhasha (e.g., Alpaca or ChatML) mein format karein.

- Training: Google Colab ya AWS par LoRA weights train karein.

- Merging: In naye weights ko asli model ke saath "Merge" kar dein.

5. Summary Table: Fine-tuning Comparison

| Method | VRAM Required | Performance | Best For |

|---|---|---|---|

| Full FT | 500GB+ | 100% | Research / Huge Labs |

| LoRA | 24GB - 48GB | 98% | Startups / Companies |

| QLoRA | 8GB - 12GB | 95% | Individual Developers |

FAQs

1. "Catastrophic Forgetting" kya hota hai? Jab model naya kaam (Legal advice) seekhte waqt purani baatein (Coding) bhool jaye. Ise rokne ke liye hum LoRA aur balanced data use karte hain.

2. Kya mujhe fine-tuning ke liye millions of rows chahiye? Nahi! Sirf style change karne ke liye 100-500 acche examples kaafi hain.

3. "PEFT" kya hai? PEFT = Parameter Efficient Fine-Tuning. LoRA aur QLoRA isi category mein aate hain kyonki ye poore model ke bajaye sirf 1% parameters train karte hain.

4. ChatGPT fine-tune kaise hota hai? OpenAI apne API par simple "File upload" facility deta hai. Unka background system apne aap fine-tuning handle kar leta hai, aapko sirf dataset dena hota hai.

Fine-tuning AI ko "Aapka Apna" banati hai. Ise master karke aap kisi bhi AI ko kisi bhi role mein dhaal sakte hain! 🛠️

Tarun ke baare mein: Tarun parameter-efficient tuning aur adapter-based LLM customization ke specialist hain. AI-Gyani par har model fine-tuned aur specialist hai.