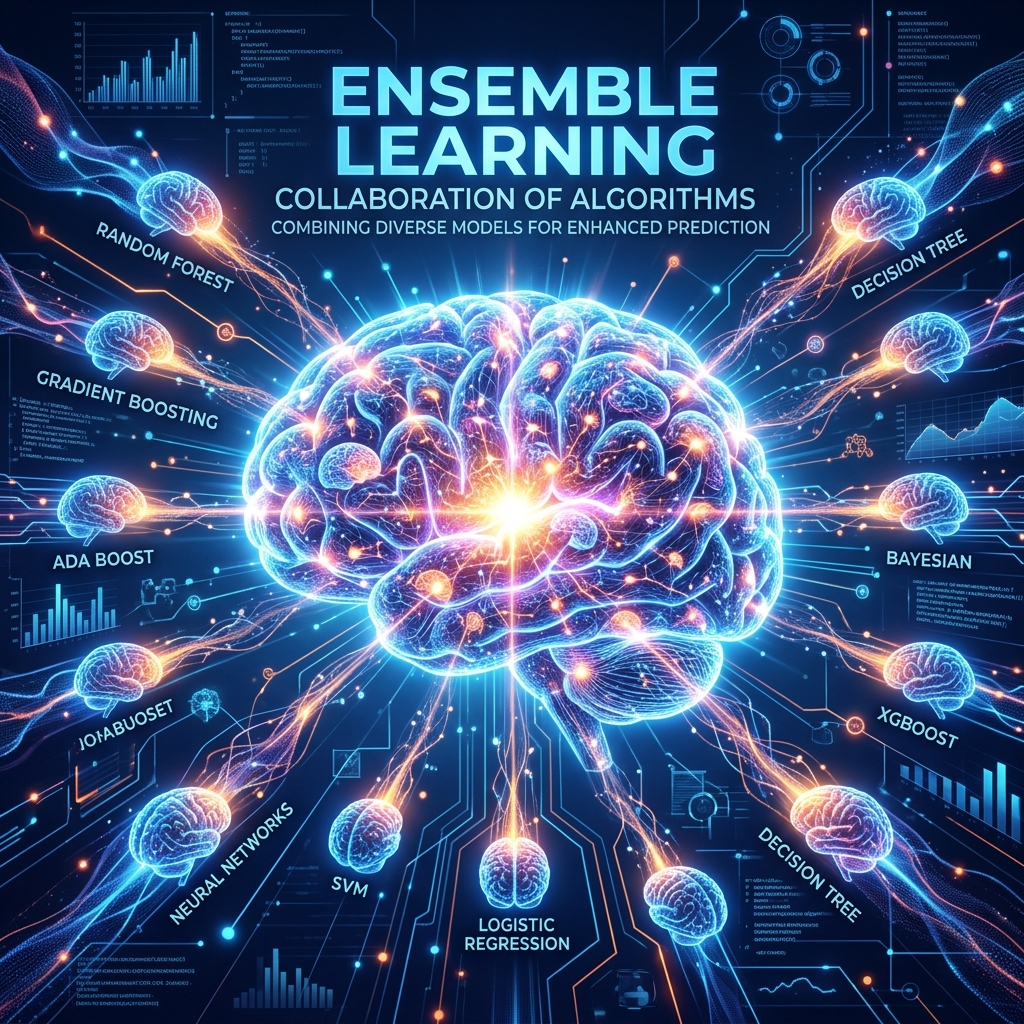

Aapne wo kahawat suni hogi: "Ek se bhale do." Machine Learning mein isi concept ko hum Ensemble Learning kehte hain. Ek akela model galti kar sakta hai, lekin jab hum bahut saare models ko milakar unka "Average" ya "Voting" lete hain, toh humein milta hai ek Super Model.

1. Bagging (Bootstrap Aggregating)

Bagging ka main kaam hai — Overfitting kam karna.

- Random Forest iska king hai.

- Ye saare models ko "Parallel" train karta hai. Har model data ka alag tukda dekhta hai aur aakhir mein sab milkar "Vote" karte hain.

2. Boosting: Seekh par Seekh

Boosting ka kaam hai — Accuracy badhana.

- Ye models ko "Sequential" (Ek ke baad ek) train karta hai.

- Pehla model galti karta hai, dusra model sirf unhi galtiyon ko theek karne ki koshish karta hai.

- XGBoost, LightGBM, aur CatBoost isi logic par chalte hain aur Kaggle competitions jeet-te hain.

3. Stacking: Level-up Strategy

Stacking mein hum models ke results ko "Average" nahi karte, balki unhein ek aur "Meta-Model" mein daalte hain.

- Maan lijiye 3 doston ne alag-alag phones suggest kiye.

- Stacking mein ek chautha "Smart Dost" (Meta-model) ye decide karega ki kis dost ki baat kab manni hai.

4. Weak Learners: Stumps ka power

Boosting mein hum aksar bahut chote ped (Stumps) use karte hain.

- Inhein Weak Learners kehte hain.

- Ek akela stump bekar hai, par jab hazaron stumps ek saath aate hain, toh wo duniya ka sabse powerful model banate hain.

5. Summary Table: Bagging vs Boosting

| Feature | Bagging | Boosting |

|---|---|---|

| Workflow | Parallel (Sab saath mein) | Sequential (Ek ke baad ek) |

| Main Goal | Reduces Variance (Overfitting) | Reduces Bias (Underfitting) |

| Example | Random Forest | XGBoost, AdaBoost |

| Complexity | Simple to tune | Hard to tune |

FAQs

1. "Learning Rate" boosting mein kyon zaroori hai? Kyonki boosting sequential hai, agar learning rate bahut bada hua toh model agle step mein "Pichli galtiyon" ko theek karne ke bajaye unhe aur bigad dega.

2. Stacking kab use karein? Sirf tab jab aapko 0.1% accuracy badhani ho (jaise competitions mein). Real industry projects mein ye model ko bahut "Heavy" aur slow bana deta hai.

3. XGBoost itna fast kyon hai? Kyonki ye parallel processing aur hardware optimization use karta hai. Iska math itna efficient hai ki ye millions of rows ko minto mein process kar sakta hai.

4. Kya Ensemble Learning humesha better hota hai? Haan, accuracy ke maamle mein ye single model se behtar hota hai. Lekin iska nuksan ye hai ki ye "Black Box" hota hai — aap asani se ye nahi samjha sakte ki decision kyon liya gaya.

Ensemble Learning ML ka "Team Work" hai. Jab models milkar kaam karte hain, toh namumkin prediction bhi mumkin ho jati hai! 🚀

Tarun ke baare mein: Tarun stacking strategies aur boosting algorithms ke specialist hain. AI-Gyani par har ensemble winning-grade hai.