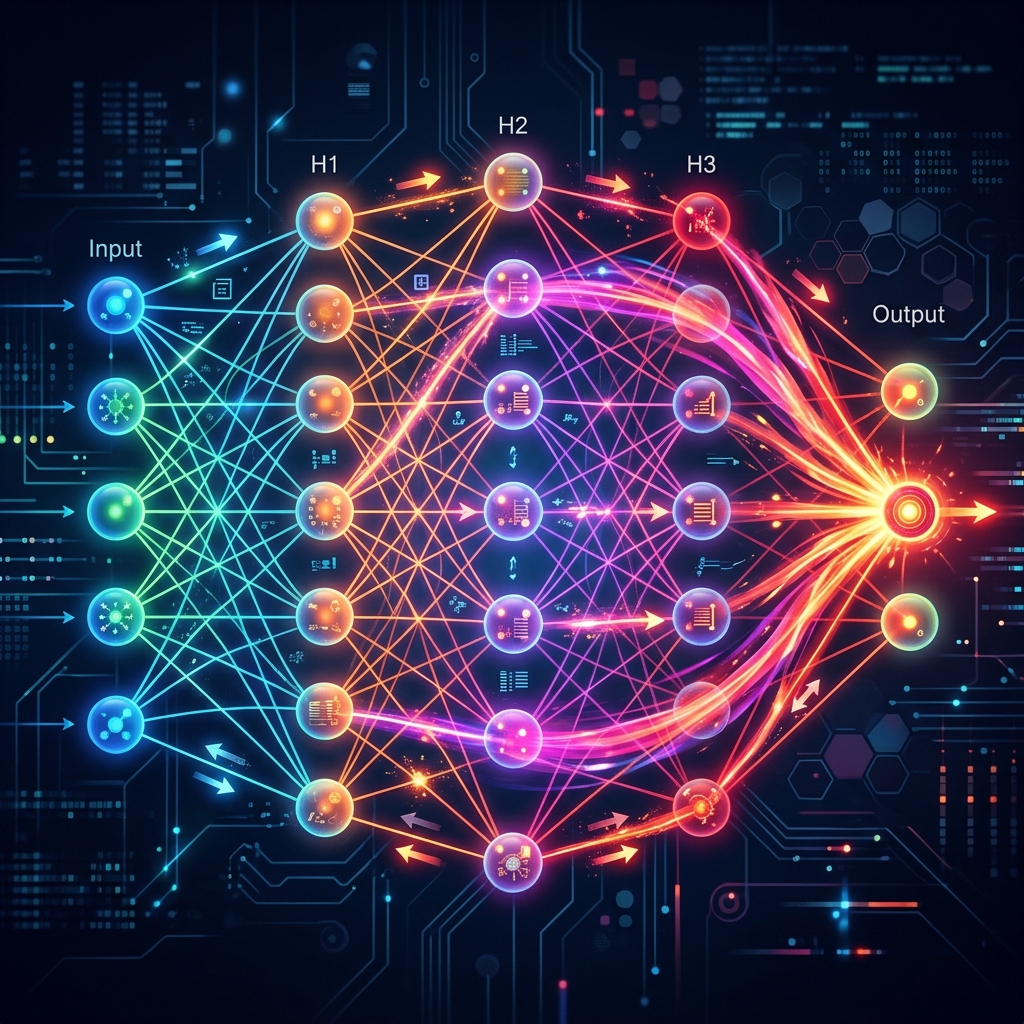

Agar aap mujhse puchein: "AI ki sabse badi khoj kya hai?" — Mera jawab hoga Backpropagation. Bina iske, Neural Networks sirf dher saare random numbers hote. Ye wo jadui process hai jo galti (Loss) se seekhti hai aur model ko dhire-dhire intelligent banati hai.

1. The Two-Way Street: Forward & Backward

- Forward Pass: Model input leta hai aur ek guess (Prediction) karta hai. Ye shuruat mein aksar galat hota hai.

- Backward Pass (Backprop): Hum galti (Error) ko piche ki taraf bhejte hain. Har layer ko bataya jata hai: "Tune itni galti ki, apna weight itna badal lo."

2. Chain Rule: The Calculus Engine

Backpropagation ke piche math ka Chain Rule hai.

- Imagine kijiye ek factory line mein 5 log hain. Aakhiri bande ne product kharab kiya.

- Hum piche jayenge: 5th se puchenge, phir 4th se, phir 3rd se... aur sabki galti (Derivative) nikaalenge.

- Math mein: $\frac{dLoss}{dWeight} = \frac{dLoss}{dOutput} \cdot \frac{dOutput}{dHidden} \cdot \frac{dHidden}{dWeight}$

3. The Loss Landscape

Model ek andheri pahaadi par hai aur use sabse geheri khaayi (Zero Error) dhoondhni hai.

- Gradients: Ye humein batate hain ki "Agla kadam kahan rakhna hai".

- Agar gradient positive hai, toh weight kam karo. Agar negative hai, toh weight badhao.

4. Why GPUs?

Backpropagation mein har layer ke liye calculus solve karna hota hai. Ek model mein millions of weights hote hain.

- CPU ye kaam ek-ek karke karta hai (Slow).

- GPU hazaron calculus equations ek saath (Parallel) solve karta hai. Isliye DL ke liye GPU mandatory hai.

5. Summary Table: Backprop Cycle

| Step | Action | Math Logic |

|---|---|---|

| Forward | Prediction | Matrix Multiplication |

| Loss | Compare with Label | Mean Squared Error / Cross Entropy |

| Backward | Feedback | Chain Rule (Derivatives) |

| Update | Fix Weights | $W = W - LR \cdot Gradient$ |

FAQs

1. "Exploding Gradient" kya hota hai? Jab derivatives itne bade ho jayein ki weights "Infinite" ho jayein aur model crash ho jaye. Ise "Gradient Clipping" se theek karte hain.

2. Kya Backpropagation insaani dimaag mein hota hai? Research kehti hai ki hamara dimaag thoda alag tareeke se seekhta hai (Hebbian learning), par Backpropagation abhi tak ka sabse best "Mathematical replica" hai learning ka.

3. "Epoch" aur "Iteration" mein kya fark hai? Iteration matlab ek batch (e.g. 32 photos) par Backprop karna. Epoch matlab jab poora data (e.g. 1000 photos) ek baar khatam ho jaye.

4. Kya mujhe backprop code karna hoga?

Nahi! PyTorch mein sirf loss.backward() likhne se ye poora math piche apne aap solve ho jata hai.

Backpropagation AI ka "Teacher-Student" relation hai. Galti karo, piche jao, aur sudharo! 🔄

Tarun ke baare mein: Tarun mathematical learning theory aur gradient flow optimization ke specialist hain. AI-Gyani par har backward step ek forward progress hai.