2015 mein Google Photos app ne kuch Black users ki photos ko "Gorillas" tag kar diya. Google sharminda hua, maafi maangi, aur feature band kiya. Lekin ye event ek bahut badi problem ki taraf point karta hai — AI Bias.

Ye accident nahi tha. Ye tha training data ka result — jisme mostly white faces thi, aur darker skin tones underrepresented thi.

Aaj hum samjhenge ki AI Bias exactly kya hota hai, kahan se aata hai, aur isse kaise rokein.

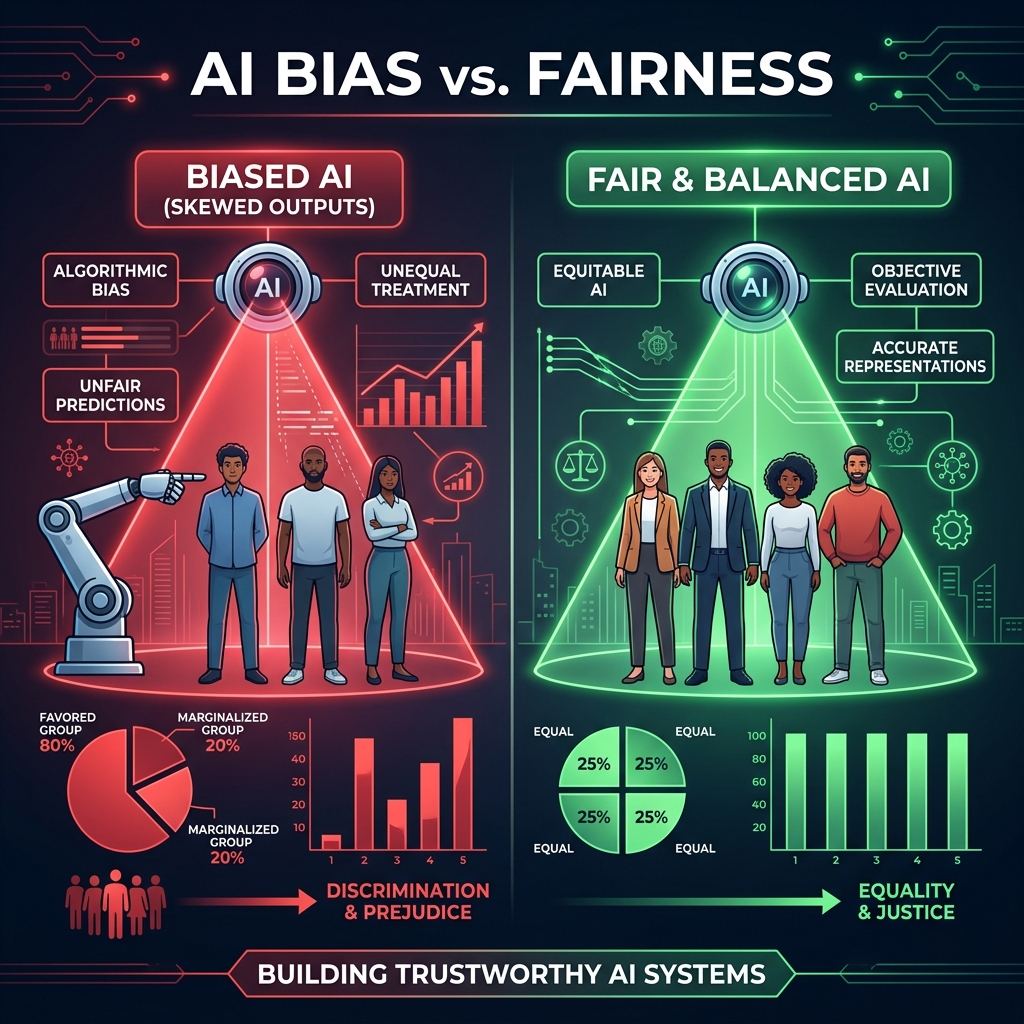

AI Bias kya hota hai?

AI Bias tab hota hai jab ek AI system kuch specific groups ya categories ke liye unfair, inaccurate, ya discriminatory results deta hai.

Ye intentional nahi hota — usually. Lekin unintentional bias bhi bahut harmful ho sakta hai.

Sochiye ek AI judge jo bail decide karta hai. Agar woh AI historically biased data pe train hua hai — kuch communities ke saath ahistorically zyada strict raha hai — toh AI bhi wahi pattern follow karega. Ek galat AI decision kisi ki zindagi kharaab kar sakta hai.

AI Bias kahan se aata hai?

1. Biased Training Data

Sabse common cause. Agar data mein already inequality hai, AI usse seekh leta hai.

Example: Ek facial recognition system sirf light-skinned faces pe train hua. Dark-skinned faces pe accuracy? Sirf 35% — vs 99% for light-skinned. (MIT Media Lab study, Joy Buolamwini)

2. Historical Bias

Agar historical decisions biased the — aur aap usi data pe train karo — AI history repeat karega.

Example: Amazon ka hiring AI 10 saalon ka historical hiring data dekh ke seekha. Kyunki historically zyada men hire hue the, AI women applications ko automatically downgrade karne laga.

3. Sampling Bias

Agar training data mein sab demographics represent nahi hain, model un groups ke liye poorly perform karega.

Example: Ek medical AI mostly western patients ke data pe train hua. Indian ya African patients pe uski accuracy bahut kam thi.

4. Confirmation Bias

Agar model designers ke apne assumptions hain, woh unconsciously model mein wahi assumptions daal dete hain.

5. Label Bias

Jab humans data ko label karte hain, unke biases bhi label mein aa jaate hain.

Example: "Professional haircut" label karwate waqt labelers ne mostly straight hair choose kiya — afro ya curly hair ko less professional mana.

Bias ke Real-World Consequences

Criminal Justice

USA mein COMPAS software bail decisions recommend karta tha. Research mein paaya gaya ki Black defendants ko white defendants se do guna zyada "high risk" predict kiya jaata tha — chahe future crimes same rate pe hon.

Healthcare

Ek study mein paaya gaya ki US hospitals ka algorithm Black patients ko less care recommend karta tha — not because of race directly — but because of healthcare spending data, jo historically unequal tha.

Job Recruitment

Multiple studies mein companies ke AI hiring tools ne identical resumes pe better scores diye jab "white-sounding" names the vs "Black-sounding" names.

AI Fairness kaise ensure karein?

1. Diverse Data Collect Karo

Training data mein saari demographics, genders, regions represent honi chahiye. Sirf ek type ka data dangerous hai.

2. Bias Testing karo

Model deploy karne se pehle test karo:

# Sklearn me fairness check

from sklearn.metrics import classification_report

# Different groups ke liye alag reports dekho

print(classification_report(y_test_group_A, y_pred_group_A))

print(classification_report(y_test_group_B, y_pred_group_B))

# Kya accuracy same hai dono ke liye?

3. Fairness Metrics use karo

- Demographic Parity: Kya predictions sabhi groups mein equal rate pe hain?

- Equal Opportunity: Kya true positive rates equal hain?

- Calibration: Kya probabilities across groups accurate hain?

4. Explainability add karo

Agar AI ka decision explain nahi ho sakta — toh bias detect karna mushkil ho jaata hai.

5. Diverse Teams banao

Agar sirf ek type ke log AI banate hain, toh woh sirf unhi ke perspective se sochenge. Diverse team automatically zyada perspectives consider karti hai.

Tools jo Bias Detect karne mein Help Karte Hain

| Tool | Company | Use Case |

|---|---|---|

| Fairlearn | Microsoft | ML model fairness assessment |

| AI Fairness 360 (AIF360) | IBM | Comprehensive bias detection |

| What-If Tool | Visual exploration of ML models | |

| Lime / SHAP | Open Source | Model explainability |

FAQs

1. Kya AI bias completely remove ho sakta hai?

Complete removal mushkil hai, lekin significantly reduce kiya ja sakta hai with diverse data aur proper testing.

2. Kya bias hamesha discrimination ke barabar hai?

Nahi — lekin agar bias real-world decisions mein use ho, toh woh discrimination ban sakta hai.

3. Mere small AI project mein bhi bias ki tension karni chahiye?

Haan! Good habits shuru se banao. Real users pe impact padta hai even small apps ka.

4. Kya AI bias ke liye laws hain?

EU AI Act mein high-risk AI systems ke liye bias testing mandatory hai. India mein abhi frameworks develop ho rahe hain.

Conclusion

AI Bias ek invisible problem hai — lekin iske effects bahut visible aur painful hote hain real insanon ke liye.

Responsible AI developer banna matlab hai: Data ko question karna, assumptions ko challenge karna, aur regularly check karna ki aapka AI sab ke saath fairly behave kar raha hai.

Agle post mein hum baat karenge — AI Security Risks — aapke AI systems pe kaunse cyber threats hain aur unse kaise bachein. 🛡️